In Fly swatter, I wrote that the first implementation of ‘Schrödinger’s Honeypot’ carried an inherent potential for Denial of Service, since it blocked the IP address for a whole day on every single violation.

Denial-of-Service

Over the following evenings, I gave some thought to how this problem could be addressed: How do you solve the dilemma of keeping scanners off your back on one side, while keeping as few legitimate users away as possible on the other? And, taking it one step further: how do you prevent someone from making a sport out of abusing the detection component to block access for everyone else behind a NAT gateway? It’s relatively easy to figure out what the detection component is looking for — just try a few well-known scanner patterns. When the lights go out, you’ve found a pattern.

Why?

Before I go on, let me answer one question up front. Do I actually need all this? Probably not. I went down this path out of curiosity. My current suspicion is that, given the traffic volume my blog has, the mechanism I’m presenting here consumes more resources than the webserver itself. The complexity is probably grotesque. After all, my blog just serves static pages: sendfile on incoming requests to port 80. Just about the simplest thing a server on the internet can do. With dynamic sites, however, the picture might already look different. What actually bugged me was that my Apache log file was full of scan attempts. For me, all of this is primarily a hypothesis tester. I had a few hypotheses about this scanner crowd before I implemented this, and I’ve picked up a few more along the way, which I’m now testing in turn.

I haven’t finished implementing everything yet. I’ll say up front: large parts of this were written for me by an LLM based on a mile-long specification. I couldn’t have gotten this prototype up and running this quickly on my own. I haven’t done the exact math, but in the time since the idea for Schrödinger’s Honeypot came to me, I wouldn’t have been able to type it all out by hand.1 To put it mildly, the process of building this was at times like backing up a car with a trailer. Look away for a second and everything has veered off in the wrong direction.2 This was exhausting.

I accepted this for two reasons: without the LLM I wouldn’t have had the time to build it at all, and, above all, this mechanism has no interface of its own facing the outside. Everything that flows in from the outside goes through Apache; the mechanism only consumes log files. Aside from that, it makes DNS and HTTP requests to external services. I think the risk is manageable, at least for now and at least for me.

Architecture

Essentially, the mechanism consists of a series of daemons that supply data and in some cases pre-process it, plus one daemon that takes action based on that data.

The daemons listen exclusively on 127.0.0.1. I didn’t want to bolt TLS onto this. It’s a hypothesis tester, after all. And all you can query through these daemons is information — “how often have you seen this IP in the honeypot over the last 24 hours?”, “is this IP in an autonomous system worth blocking?” If an attacker can reach these ports, I’ve got a very different problem on my hands.

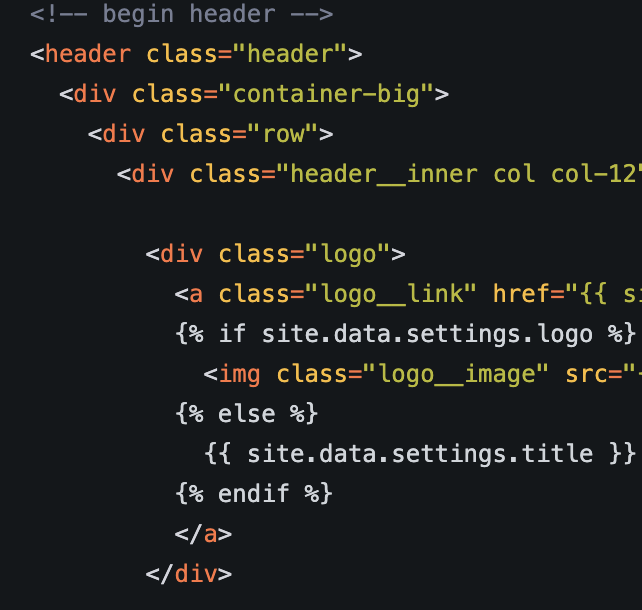

There’s no detection logic in these daemons; detection is handled by Apache itself through mod_rewrite rules. Those rules determine what ends up going through this mechanism: Apache writes a separate log file that contains only the accesses matching them. I’ve put a selection of these rules, derived from what I was seeing in my log files, into a file. As long as I’m still actively tweaking them, I’m keeping them in .htaccess so I can change them easily. Eventually, for performance reasons, they should move into the Apache configuration itself, because Apache re-reads .htaccess files on every request.
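To make the division of labour concrete, here is a minimal Python sketch of what the rewrite rules effectively decide: whether a request path matches a known scanner pattern, and, if so, that a line goes into the dedicated honeypot log. The patterns and the log-line format are illustrative assumptions, not the rules from the repository; in the real setup this matching happens inside mod_rewrite, which also answers with the 403.

```python
import re
from typing import Optional

# Illustrative patterns only: typical scanner targets, NOT the author's actual rule set.
HONEYPOT_PATTERNS = [
    re.compile(r"/wp-(login|admin|includes|content)", re.IGNORECASE),
    re.compile(r"/xmlrpc\.php$", re.IGNORECASE),
    re.compile(r"/\.env$"),
    re.compile(r"/\.git/"),
    re.compile(r"\.php$", re.IGNORECASE),  # a static blog has no PHP at all
]


def is_honeypot_hit(path: str) -> bool:
    """Return True if the request path matches one of the scanner patterns."""
    return any(pattern.search(path) for pattern in HONEYPOT_PATTERNS)


def honeypot_log_line(ip: str, path: str) -> Optional[str]:
    """Emit a log line only for matching requests, like the dedicated honeypot log."""
    if is_honeypot_hit(path):
        return f"{ip} {path}"
    return None
```

Everything that does not match never reaches the daemons, which is why there is no detection logic downstream.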

In the end it turned into quite a Rube Goldberg machine. If anyone wants to blame the LLM for the wonky architecture: nope… that was me.

Approach

Back to the underlying question: How high is the collateral damage when I hand out a ban? Maybe the IP is a VPN gateway, a NAT device, maybe even CGNAT. Behind that IP there might not be just one user, but many. Only one of them scanned, but a ban locks all of them out of the blog for a day.

My first reaction to this was to block offenders for only 120 seconds. That stopped the really coarse mass scans, but most scanners simply came back: some right after the block expired, others after an hour or so.

Something in between was needed. Not the orbital anvil, but not the flyswatter either. Maybe an orbital socket wrench.3

Rule zero is: you have to misbehave to come into contact with any of this. A regular user making proper use of the system isn’t looking for WordPress artifacts or web shells. It’s impossible for a legitimate user to trigger this mechanism. Nobody types in a scanner pattern by accident.

The first rule is probably the simplest: if you scan again within the 24 hours following a block, you get another 24 hours to wait. Until you’ve learned. Flyswatter decides this on its own. It’s the shortest path from the honeypot into the firewall rules.
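One plausible shape for the in-memory state table behind this rule, as a minimal sketch: a dictionary mapping each IP to the time of its last block. `RepeatOffenderTable` and its methods are hypothetical names, not Flyswatter’s actual code.

```python
import time
from typing import Dict, Optional

BAN_SECONDS = 24 * 60 * 60  # one day, as described in the text


class RepeatOffenderTable:
    """Hypothetical in-memory state: when was each IP last blocked?"""

    def __init__(self) -> None:
        self._last_block: Dict[str, float] = {}

    def record_block(self, ip: str, now: Optional[float] = None) -> None:
        self._last_block[ip] = time.time() if now is None else now

    def is_repeat_offender(self, ip: str, now: Optional[float] = None) -> bool:
        """True if the IP was blocked within the last 24 hours."""
        now = time.time() if now is None else now
        last = self._last_block.get(ip)
        return last is not None and now - last < BAN_SECONDS
```

Passing `now` explicitly keeps the logic testable; in a daemon it would simply default to the current time.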

Okay, the second rule checks whether the IP is a known cloud IP. The hypothesis is: when I’m seeing clear signs of a scan coming from an IP, it’s considerably more likely that someone (legitimately or not) has obtained the credentials of a cloud OS instance and is scanning from there than that the IP is a NAT exit node. The risk of collateral damage is low compared to the likelihood that I’m dealing with a scanner. This was the first test I implemented, which is also why my implementation lives under /opt/cloudcheck. If you come from a cloud, it’s off to the quiet corner for a day. The first collateral-damage problem already shows up here: VPN providers put their endpoints in exactly such clouds.
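A minimal sketch of the cloud check using Python’s standard `ipaddress` module, assuming the provider ranges have already been downloaded as CIDR strings (several large providers publish such lists). The function names are hypothetical, and the example ranges in the usage are documentation prefixes, not real cloud ranges.

```python
import ipaddress
from typing import Iterable, List, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]


def load_cloud_ranges(cidrs: Iterable[str]) -> List[Network]:
    """Parse CIDR strings, e.g. taken from the providers' published range lists."""
    return [ipaddress.ip_network(cidr, strict=False) for cidr in cidrs]


def is_cloud_ip(ip: str, ranges: List[Network]) -> bool:
    """True if the address falls into any of the known cloud ranges."""
    addr = ipaddress.ip_address(ip)
    # An IPv4 address is never "in" an IPv6 network (and vice versa),
    # so a mixed list of v4 and v6 ranges needs no special handling.
    return any(addr in net for net in ranges)
```

A linear scan is fine for a few hundred prefixes; with thousands of ranges, a sorted list with binary search or a prefix trie would be the more efficient choice.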

The third check works on the basis of the IP’s ASN. First, the ASN responsible for the IP is determined via Team Cymru’s IP-to-ASN mapping service. The result is checked against the Spamhaus DROP list (which I refresh regularly in the background) and against a local list. That local list contains ASNs like those of Hetzner, OVH, or Contabo. Contabo servers have proven to be repeat offenders in my log files. If this check decides you’re trouble, that also means a one-day block. Only the specific IP that misbehaved gets blocked; the whole thing isn’t meant as collective punishment. With those providers, you get a server with an IP address, and if you use one of those servers to scan, it’s pretty unlikely that regular users would also be coming through it. With one exception: what does worry me here are the egress points of VPN providers hosted with these kinds of providers.
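Team Cymru’s DNS interface answers a TXT query for the reversed IPv4 octets under `origin.asn.cymru.com` with a pipe-separated record (origin ASN, prefix, country code, registry, allocation date). The sketch below only builds the query name, parses such an answer, and checks the ASN against two sets; the actual DNS lookup (for example with the third-party dnspython package) and the loading of the DROP list are left out, and IPv6 is not handled. Function names are hypothetical, the parser also tolerates several space-separated ASNs in the first field, and the ASNs in the usage example come from the range reserved for documentation (RFC 5398).

```python
import ipaddress
from typing import List, Set


def cymru_origin_qname(ip: str) -> str:
    """Build the TXT query name for Team Cymru's IP-to-ASN DNS service (IPv4 only)."""
    addr = ipaddress.ip_address(ip)
    if addr.version != 4:
        raise ValueError("this sketch only handles IPv4")
    reversed_octets = ".".join(reversed(str(addr).split(".")))
    return f"{reversed_octets}.origin.asn.cymru.com"


def parse_origin_txt(txt: str) -> List[int]:
    """Extract the origin ASN(s) from an answer like '64496 | 192.0.2.0/24 | ZZ | ...'."""
    asn_field = txt.strip().strip('"').split("|")[0]
    return [int(asn) for asn in asn_field.split()]


def is_bad_asn(asns: List[int], drop_asns: Set[int], local_asns: Set[int]) -> bool:
    """True if any origin ASN is on the DROP-derived set or on the manual list."""
    return any(asn in drop_asns or asn in local_asns for asn in asns)
```

For example, `cymru_origin_qname("192.0.2.10")` yields `10.2.0.192.origin.asn.cymru.com`. Caching the answer per prefix is what keeps this rule cheap on repeat visits.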

The fourth rule queries an external resource for the first time: AbuseIPDB. If you’re flagged as “bad” there with a certain confidence, that results in a one-day ban. It’s deliberately placed at the end. The number of requests to AbuseIPDB is limited in the free tier, and later on depends on how much you pay. The mechanism is meant to keep as many requests away from AbuseIPDB as possible.
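A minimal sketch of the quota-saving side: a cache in front of the reputation lookup, so an IP that shows up repeatedly costs only one API call per cache period. The HTTP call is injected as a function; against AbuseIPDB’s v2 API it would wrap a GET to `https://api.abuseipdb.com/api/v2/check` with the `ipAddress` parameter and the `Key` header, reading `abuseConfidenceScore` (0 to 100) from the `data` object. The 24-hour TTL is an assumption; the threshold of 80 is the one mentioned further down. `ReputationCache` is a hypothetical name.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class ReputationCache:
    """Cache abuse-confidence scores so repeat visitors don't cost API quota."""

    def __init__(self, fetch_score: Callable[[str], int],
                 ttl: float = 24 * 3600, threshold: int = 80) -> None:
        self._fetch = fetch_score  # e.g. a wrapper around the /api/v2/check call
        self._ttl = ttl
        self._threshold = threshold
        self._cache: Dict[str, Tuple[float, int]] = {}
        self.api_calls = 0  # how many lookups actually reached the service

    def score(self, ip: str, now: Optional[float] = None) -> int:
        now = time.time() if now is None else now
        hit = self._cache.get(ip)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # answered from the cache, no quota used
        self.api_calls += 1
        value = self._fetch(ip)
        self._cache[ip] = (now, value)
        return value

    def should_ban(self, ip: str, now: Optional[float] = None) -> bool:
        return self.score(ip, now) >= self._threshold
```

The `api_calls` counter makes the cache effect observable, which would also help to count how many lookups never reach the service.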

The rule ordering follows a simple logic: from cheap to expensive. Rule 0 is a by-product of the web server, Rule 1 queries an internal in-memory state table, Rule 2 queries daemons that access a local dataset, Rule 3 makes a DNS query against Cymru’s offering (if it’s not cached) and cross-references it with the regularly refreshed DROP list and a manually maintained dataset of my own, and with Rule 4 we finally reach a service that is resource-limited.
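The cheap-to-expensive ordering boils down to a short-circuit cascade. A minimal sketch, assuming each rule is a function that returns a ban reason or `None`; Rule 0 is implicit, because the cascade only runs for requests that already landed in the honeypot log. The names and the `(name, rule)` tuple layout are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# A rule returns a ban reason, or None to pass the decision on to the next rule.
Rule = Callable[[str], Optional[str]]


def decide(ip: str, rules: List[Tuple[str, Rule]]) -> Tuple[Optional[str], List[str]]:
    """Evaluate rules in order (cheapest first) and stop at the first ban reason.

    Returns the reason (or None) plus the names of the rules actually evaluated.
    """
    evaluated: List[str] = []
    for name, rule in rules:
        evaluated.append(name)
        reason = rule(ip)
        if reason is not None:
            return reason, evaluated
    return None, evaluated
```

Because the loop stops at the first reason, the quota-limited last rule is only consulted when every cheaper rule has passed.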

And even though the current implementation works the other way around, the whole mechanism was actually conceived from the opposite direction. I started from “I see you in honeypot.log, you’re out!” and then went looking for reasons not to block. “You’re from a cloud, I’m blocking you for that” also means “you’re not from a cloud, so I’m not blocking you for that. But maybe for other reasons.” Likewise, blocking everything with a reputation score of 80% or higher also means not blocking on the basis of the reputation rule if the score is lower than 80%.

Massive Retaliation

There’s another feature too: Group Bans. I’ve observed that some scanners don’t come from a single IP; instead an entire subnet lights up, with each individual IP sending only one or two requests. When I spot that in my log files, an entry goes into a groupban list that blocks whole groups of IPs at once. The first request to the honeypot then prevents 254 others (or more) from scanning. I have a rule to close off several subnets at once, because they tend to come all at the same time. They live, for instance, at a bulletproof hoster that doesn’t care where traffic comes from. These are manually added bans where I’m reasonably sure that no normal users are moving behind them. I can also use this group feature to hand out long-term bans or permanent bans.
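A minimal sketch of a group-ban lookup, assuming each manually added entry is a CIDR network with an optional expiry (`None` standing for a permanent ban). `GroupBan` and `matching_group_ban` are hypothetical names; the example networks are documentation prefixes.

```python
import ipaddress
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class GroupBan:
    network: str               # e.g. a /24 or a larger block
    expires: Optional[float]   # Unix timestamp; None means permanent


def matching_group_ban(ip: str, bans: List[GroupBan], now: float) -> Optional[GroupBan]:
    """Return the first active group ban covering the IP, if any."""
    addr = ipaddress.ip_address(ip)
    for ban in bans:
        if ban.expires is not None and ban.expires <= now:
            continue  # expired entry, ignore it
        if addr in ipaddress.ip_network(ban.network, strict=False):
            return ban
    return None
```

A match would translate into one firewall rule for the whole network rather than one per address. Parsing the networks once at load time instead of on every lookup would be the obvious refinement.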

Why 403 and filter?

Why both, actually? Why either one at all? Since there’s no WordPress on my box, every scan would just hit a 404. That said, the 404 page on my site (as I explained elsewhere) is pretty heavyweight, because it also hosts a Lunr-based search function with a pre-generated index. If a user arrives at my blog via a dead link, they should be able to check whether comparable information exists elsewhere. But even that barely causes any damage. It just fills up the log file. So why didn’t I just leave it at a 404?

Schrödinger’s Honeypot cheats in my implementation. A 404 would give the attacker the information that something is not there. A 403 communicates that something is forbidden. The difference is significant, because a 403 doesn’t reveal whether the thing exists. So the superposition, as far as the attacker is concerned, doesn’t just always collapse into a ban — they never had any chance of collapsing it into the other position either, because over there a different superposition is posing a different question: “present and forbidden = WordPress” or “not present and forbidden = no WordPress”.

Why the IP filtering on top? My ruleset only blocks patterns I know about, and in the same scan run it would hand over the requested information (there or not there) for unknown patterns. By blocking at the firewall level, unknown requests don’t even get far enough to reveal any information. Unless, by chance, they use a pattern unknown to me first, they don’t even get to learn whether that pattern is familiar to me (they could, of course, infer that it’s unknown to me from the 404 I would send, if it’s not specifically blocked by the rules).
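A deliberately simplified sketch of what a prober observes, assuming every hit on a known pattern leads straight to a ban (in the real setup, the rule cascade decides, and some blocks are short). The function name and the `"RST"` marker for a rejected connection are illustrative. It shows why the firewall matters: after the first known pattern, an unknown probe no longer yields a 404 that would tell the scanner something.

```python
from typing import Callable, Set, Union


def handle_probe(ip: str, path: str, banned: Set[str],
                 is_known_pattern: Callable[[str], bool],
                 exists: Callable[[str], bool]) -> Union[int, str]:
    """Return what the client sees: an HTTP status code or "RST"."""
    if ip in banned:
        return "RST"   # firewall rejects the connection; nothing is revealed
    if is_known_pattern(path):
        banned.add(ip)  # simplified: every known-pattern hit bans immediately
        return 403      # forbidden, whether or not the path exists
    return 200 if exists(path) else 404
```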

Data Protection

There are several points in this solution where data protection questions come into play. In my opinion, there’s no better way to develop a migraine than to ponder the implications of data protection rules as a layperson. It might be different for lawyers. They probably look forward to it with delight, given its potential for “billable hours.”

My system queries AbuseIPDB about IP addresses, asking what reputation each IP has. Now, an IP address is explicitly PII under GDPR. I take the position that I have a legitimate interest in finding out the reputation of an IP that is engaged in behaviour outside the intended use (and only then).

And one question that preoccupies me: can an automated system whose purpose is to collect information about me itself have rights under GDPR? Especially considering that GDPR talks about natural persons? I understand that the GDPR protects the person behind the IP, but is the person behind the IP of a VM running an automated process a natural person (namely, the one who owns the VM), or is it the company (and thus not a natural person) whose infrastructure hosts the VM? I don’t know. But then again, I’m not a lawyer.

But I have a more direct problem. One of my ideas that hasn’t been implemented yet needs the IP address of every request that hits my blog in order to collect the successful requests of the last 24 hours. I’ve solved this problem as follows. I have a log file on disk in which the IP address is pseudonymized by replacing the last octet with a 0. A second log channel carries a non-anonymized set of data. It’s considerably reduced (time, IP address, and result code, nothing else), and it is piped to a daemon that keeps its information exclusively in main memory. This introduced two intentional problems for me. The first: a restart means data loss, which in turn means that the function’s usefulness is limited for the next few hours.
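A minimal sketch of both halves, assuming IPv4: the on-disk pseudonymization that zeroes the last octet, and an in-memory store of `(time, ip, status)` tuples that forgets everything older than 24 hours. Class and function names are hypothetical, and the pruning assumes events arrive in time order.

```python
import ipaddress
from collections import deque
from typing import Deque, Tuple


def pseudonymize_ipv4(ip: str) -> str:
    """Zero the last octet, as in the on-disk access log (IPv4 only)."""
    addr = ipaddress.ip_address(ip)
    if addr.version != 4:
        raise ValueError("this sketch only handles IPv4")
    return str(ipaddress.ip_network(f"{addr}/24", strict=False).network_address)


class SuccessWindow:
    """Hypothetical in-memory store of (time, ip, status); nothing touches disk."""

    WINDOW = 24 * 3600  # seconds

    def __init__(self) -> None:
        self._events: Deque[Tuple[float, str, int]] = deque()

    def add(self, ts: float, ip: str, status: int) -> None:
        self._events.append((ts, ip, status))

    def successes(self, ip: str, now: float) -> int:
        """Count 200 responses for the IP within the last 24 hours."""
        # Drop entries older than the window (events are assumed to be time-ordered).
        while self._events and now - self._events[0][0] >= self.WINDOW:
            self._events.popleft()
        return sum(1 for _, event_ip, status in self._events
                   if event_ip == ip and status == 200)
```

Since the deque lives only in memory, a restart empties it, which is exactly the intentional data loss described above.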

More importantly, I can’t query information with the pseudonymized IP address; the current implementation needs the exact IP. I could of course try all 254 host addresses and thus de-pseudonymize a pseudonymized address, but that only works if exactly one IP in that /24 subnet shows up in the in-memory data. If, within the entire 1.2.3.0/24 subnet, only one IP address (say 1.2.3.4) has 200-result-code data recorded for it, then it’s clear from whom the retrieval in the access log came, even though it was pseudonymized to 1.2.3.0. On the one hand, GDPR does permit storing PII on the basis of legitimate interest; on the other hand, you really have to go out of your way to pull this off, and it only works under specific conditions. Full pseudonymization kicks in after 24 hours without my having to do anything, because the in-memory data ages out. I haven’t yet found a way to achieve immediate anonymization.
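To illustrate the condition described above, here is a sketch of the de-pseudonymization check: given a pseudonymized `x.y.z.0` address and in-memory `(ip, status)` records, it returns the full IP only if exactly one address in that /24 has 200 responses recorded. The function name is hypothetical; the point is to show how narrow the condition is, not to recommend doing it.

```python
import ipaddress
from typing import Iterable, Optional, Tuple


def reidentify(pseudonymized: str, recent: Iterable[Tuple[str, int]]) -> Optional[str]:
    """Return the single full IP behind a pseudonymized x.y.z.0 address, if unique."""
    net = ipaddress.ip_network(f"{pseudonymized}/24", strict=False)
    candidates = {ip for ip, status in recent
                  if status == 200 and ipaddress.ip_address(ip) in net}
    # Two or more candidates (or none) means the pseudonym cannot be resolved.
    return next(iter(candidates)) if len(candidates) == 1 else None
```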

And if you want a really migraine-worthy question: is creating a firewall rule for a single IP itself a storage of PII?

(Side note of 23.04.2026: I solved the problem by turning it on its head, but more on that in a future blog entry.)

Building Your Own

You can build this mechanism yourself. It would be pointless, though, to write the instructions into this blog post. You’ll find all the components along with instructions in my Codeberg repository.

The Flyswatter for Apache is available at https://codeberg.org/c0t0d0s0/flyswatter/src/tag/v0.01/flyswatter. The daemons Flyswatter needs for its work are available at https://codeberg.org/c0t0d0s0/flyswatter/src/tag/v0.01/cloudcheck-suite.

Should you build it on your own? Probably not.

One comment: The ASN check still uses the term BADASN. That’s an artifact from the time when only the DROP list was being compared against, so any matching ASN was bad by definition. At some point I added a second list, which I can populate manually, to flag names or ASNs as worth a day’s ban. I didn’t remove the BADASN term. A cosmetic issue. BADASN can simply mean “is from a cloud provider”. Passing through a more specific reason, such as CLOUDASN or DROPASN, is already on my to-do list.

Ideas Not Yet Implemented

There are some statistics I’m already maintaining, but not yet using, because I want to gather numbers first.

- Number of prebans in the last 24 hours. The idea is: if you’ve run into a preban, say, 19 times in the last 24 hours, you get a full day’s time-out on the twentieth.

- There’s another daemon that counts successful access attempts over a longer period. The assumption is: if I see many 200s from one IP, then the probability is high that there is more than one user behind that IP. In that case, the time-out is only 120 seconds — maybe I’ll extend that to 5 minutes when I implement it.

- I’m also counting how often you stepped into the honeypot in the last 24 hours and how often you ran into the firewall block.
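As a sketch of how these counters might eventually feed into the block duration: the twentieth preban within 24 hours escalates to a full day, and many 200s shorten the block to 120 seconds. The threshold for “many 200s” and the precedence of the two checks are assumptions, since the text leaves both open; all names are hypothetical.

```python
from dataclasses import dataclass

FULL_TIMEOUT = 24 * 3600   # one day, in seconds
SHORT_TIMEOUT = 120        # the short block from the text, in seconds
PREBAN_LIMIT = 20          # the twentieth preban within 24 hours triggers a full day
MANY_SUCCESSES = 50        # hypothetical threshold for "many 200s"


@dataclass
class IpStats:
    prebans_24h: int   # prebans already counted for this IP in the last 24 hours
    successes: int     # 200 responses seen for this IP over the longer period


def timeout_for(stats: IpStats, default: int) -> int:
    """Adjust the timeout the rule cascade would otherwise hand out (assumed policy)."""
    if stats.prebans_24h + 1 >= PREBAN_LIMIT:
        return FULL_TIMEOUT   # assumed to take precedence over the 200s heuristic
    if stats.successes >= MANY_SUCCESSES:
        return SHORT_TIMEOUT  # likely many users behind this IP: keep the block short
    return default
```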

For some of these ideas there are already prototypes that haven’t yet made their way into the codebase, because they still need some fine-tuning before they’re even remotely usable. And I need much more data to know whether those ideas are sensible at all.

Weakness

This is important if you really want to use this: honeypotd has a weakness. It also looks for requests containing a particular string. If a request contains that string, its IP is considered your own IP. The consequence in Flyswatter is that no firewall rule gets set for it. You can essentially use this to switch off the main function of this entire contraption. I therefore suggest treating the string like a password and making it equally complex. Important: it naturally appears in log files and in the config file, and it gets transmitted across the network, both in the requests themselves and as part of the Referer header. Once everything works, it might not be a bad idea to unset the string.

I’m aware that the magic-string hack I used to implement a half-dry-run of the logic is a security hole. If you know the string, you can bypass the whole mechanism. I built it this way because I don’t have a static IP and it made testing easier for me. I use a browser plugin that appends it to every request (and only those) for https://www.c0t0d0s0.org/. Actually, that plugin has a different job: I use it to filter my own requests out of the log files.

So why did I still build it this way? Because I locked myself out a few times. Because it was something that was already there.

There are some protections in place. First, the function is switched off by default: there is no default marker. If the SELF_MARKER parameter isn’t set, the “remember my own IP” function is disabled, and there is no IP address that would only trigger a dry run. Second, what’s the possible damage? The requests in question aren’t just logged; they’re also answered with a 403. So more will be written to the log, and if other, unknown probes follow the initial known ones, those won’t be blocked. The damage is manageable, at least initially. There is no compromise of the security goals confidentiality and integrity, and with high probability (in my environment) not of the goal “availability” either. Third, in my environment there’s only one way the secret might leak to the outside, namely when someone follows an external link. In that case, the marker would appear in the Referer header. Otherwise, the blog is served over HTTPS, so the marker is invisible in transit thanks to TLS encryption. And anyone with access to the log file can probably just switch off the whole kit and caboodle in my configuration anyway.

Otherwise, if you really want to use what I’ve built here (which please only do if you’ve examined the code yourself and found it fit for purpose), I would, after successful tests in which you didn’t accidentally keep blocking yourself, simply remove the magic string completely. Then there’s no way left to put the mechanism into dry-run mode. That’s how I handle it now that I no longer need to test whether the reactive mechanisms work. If I need it again, I can switch it back on and restart the corresponding daemon.
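A minimal sketch of the marker logic as described: no default value, so an unset or empty marker disables the feature, and IPs whose requests carried the marker never get a firewall rule. Reading `SELF_MARKER` from an environment variable is an assumption (the text mentions a config file), and the class and method names are hypothetical.

```python
import os
from typing import Optional, Set


def load_self_marker() -> Optional[str]:
    """No default: an unset or empty SELF_MARKER disables the feature entirely."""
    return os.environ.get("SELF_MARKER") or None


class SelfIpTracker:
    """Remember IPs whose requests carried the marker; those only get a dry run."""

    def __init__(self, marker: Optional[str]) -> None:
        self.marker = marker
        self.own_ips: Set[str] = set()

    def observe(self, ip: str, request_line: str) -> None:
        if self.marker and self.marker in request_line:
            self.own_ips.add(ip)

    def may_set_firewall_rule(self, ip: str) -> bool:
        # Only the firewall decision is affected; logging and the 403 happen elsewhere.
        return ip not in self.own_ips
```

Removing the marker corresponds to constructing the tracker with `None`: `observe` then never records anything, so every offending IP is eligible for a firewall rule.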

Assessment

- The collateral damage problem isn’t finally solved in the current version, but it seems solvable to a large extent. Empirically, I can say that an IP that gets blocked has almost never shown significant 200s in the last 23 hours. That’s only a weak signal given my blog’s traffic, though. I don’t have millions of hits. On the other hand, a peculiarity of my readership helps me out. I have well over 1000 subscribers, which was a very surprising number for me given how little has been happening on the blog for so long. These subscribers query the blog regularly and automatically, producing a steady stream of successful requests. If an attacker and a feed reader are behind the same IP, the attack is accompanied by a certain number of 200 requests. That can be used to mitigate the VPN egress problem.

- Here too, a signal you get for free — the feed-reader heartbeat — is being put to use. The feed readers, as the most loyal audience, produce their own heartbeat. Yes, sure, that could be exploited in turn.

- All of this could also have been built with a sufficiently well-implemented Fail2Ban setup. But I would have had to build the helper daemons there too, so that the Fail2Ban scripts would have something to lean on.

Observations

I’ve been collecting data for a few days now with increasing levels of detail. Pseudonymized. But sufficient to draw some conclusions. However, this is all data for one day — the day before yesterday (21 April 2026). It’s a private blog with relatively little readership. The conclusions are therefore to be taken with a big grain of salt.

A few things I noticed:

- On that day, 572 honeypot requests came in within 24 hours.

- They came from 427 IP addresses.

- That fits with the observation that scan runs are terminated fairly quickly.

- For me, scanning is primarily an IPv4 phenomenon:

  - 11 IP addresses on the honeypot are IPv6.

  - 416 IP addresses are IPv4.

  - I still have to invest some time into improving the handling of IPv6.

- This resulted in a total of 149 firewall entries that are currently blocking traffic.

- In total, the firewall rules rejected 6913 connection attempts in that time. Scanners rarely let themselves be deterred. Even when their connection attempts are slapped away with an RST, they keep trying.

- Almost never is a scan attempt preceded by significant amounts of traffic with result code 200. That allows two conclusions:

  - The collateral damage may have been small so far.

  - The scanners encountered so far don’t seem to use shared IPs. But to be honest, the data is insufficient for this conclusion to be more than anecdotal.

- The idea of checking, on a ban, whether legitimate traffic preceded the scan attempt could prove useful to avoid collateral damage.

- I still need to come up with something to prevent a scanner from simply switching off the protection by, say, requesting the front page (/) before the scan attempt. Perhaps a useful hypothesis here is that a mass scanner isn’t going to simulate the picture of a legitimate user for several hours in advance just to come back later and scan. At least not for a small target like my blog.

- I see relatively many scanners from an AS in Vietnam. Those, however, are all one-shot scanners. One request is made and the IP doesn’t show up again. At least not during the short measurement interval this article is based on.

- For the main goal of this project, I need much more data. I think I will need a few weeks’ worth of data to test the hypotheses I mentioned before.

- First data from a new mechanism not described in this post (it will be in a future blog entry) suggests a near-zero false positive rate for this mechanism. However, this is currently just anecdotal, based on one day of data, and could prove totally wrong.

- In 1200 runs of the mechanism with the fully implemented AbuseIP daemon in place, the AbuseIPDB query was skipped in roughly a third of the cases. However, this is not the full picture: the AbuseIP daemon caches its results, and I didn’t put that information into my debug lines. It’s therefore entirely possible that further requests never hit the service because they were answered from the cache. This can happen if a scanner isn’t blocked for a day but just for two minutes, and shows up multiple times a day.

Outlook and Postscript

I’ve significantly expanded the concept and the script over the past few days. I may have found a solution for the PII problem that would allow breaking through pseudonymization for 24h. But all of that still needs more testing over the coming days. I will write about this in the next few days.

1. An idea that is so obvious that I’m sure it isn’t new.

2. Without Trailer Assist. I simply despair at backing up with a trailer without this feature. It’s easier for me to unhitch and just push the silly thing. Since I only have a small trailer for the occasional trip to the garden waste disposal (vulgo: “Klaufix”), that works…

3. And yes, I’m aware that this would probably burn up on the way to Earth. Let’s just assume a socket wrench made of unobtanium.