Sometimes you have a good idea, implement it, and only afterwards realize that this idea has a denial-of-service attack built into it. And you ask yourself: Hmm, really a good idea?

I then moved away from the old solution and thought about what I could continue to use and what I needed to redesign.

The detection side remained intact. Requests matching known questionable patterns still trigger the reaction component. However, the reaction component is now built quite differently.

For the first iteration, I kept the reaction component very simple.[1]

- The source IP is blocked in the firewall for 120 seconds. It’s a rule with `reject with tcp reset` and not `drop`.

- I remove the connection from the connection tracking table via `conntrack`.

- I terminate the connection via `ss`.

The first step blocks new connections from that IP for 120 seconds, while the other two steps actively sever the existing connection. A false positive no longer blocks access for an entire day. And the scanner should know that I don’t want it here.
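The three steps translate fairly directly into three commands. The following is a minimal Python sketch, not the actual apache-flyswatter code: `Connection`, `swat_commands` and `swat` are hypothetical names, and the table and set names are taken from the `nft list ruleset` output further down. The `conntrack` and `ss` filters are my assumption of a reasonable invocation; check them against `man conntrack` and `man ss` on your system. `ss -K` also needs root and a kernel built with `CONFIG_INET_DIAG_DESTROY`.

```python
from __future__ import annotations

import ipaddress
import subprocess
from dataclasses import dataclass

# Hypothetical sketch of the three steps; not the actual apache-flyswatter code.
NFT_TABLE = "flyswatter"  # table name as it appears in `nft list ruleset`
BAN_SECONDS = 120         # ban time of the first iteration


@dataclass(frozen=True)
class Connection:
    src_ip: str    # scanner address
    src_port: int  # scanner's ephemeral port
    dst_ip: str    # our web server address
    dst_port: int  # 80 or 443


def swat_commands(conn: Connection, ban_seconds: int = BAN_SECONDS) -> list[list[str]]:
    """Build argv lists for: block the IP, delete the conntrack entry, kill the socket."""
    v6 = ipaddress.ip_address(conn.src_ip).version == 6
    nft_set = "blocked6" if v6 else "blocked4"
    # ss needs brackets around an IPv6 address when a port is appended
    remote = f"[{conn.src_ip}]:{conn.src_port}" if v6 else f"{conn.src_ip}:{conn.src_port}"
    return [
        # 1. Add the IP to the timeout set; nftables expires the element by itself.
        ["nft", "add", "element", "inet", NFT_TABLE, nft_set,
         f"{{ {conn.src_ip} timeout {ban_seconds}s }}"],
        # 2. Delete the matching entry from the connection tracking table.
        ["conntrack", "-D", "-f", "ipv6" if v6 else "ipv4", "-p", "tcp",
         "-s", conn.src_ip, "-d", conn.dst_ip,
         "--sport", str(conn.src_port), "--dport", str(conn.dst_port)],
        # 3. Close the server-side TCP socket whose remote end is the scanner.
        ["ss", "-K", "-t", "dst", remote],
    ]


def swat(conn: Connection, ban_seconds: int = BAN_SECONDS, dry_run: bool = True) -> None:
    for argv in swat_commands(conn, ban_seconds):
        if dry_run:
            print(" ".join(argv))
        else:
            # check=False: keep going even if one step finds nothing to remove
            subprocess.run(argv, check=False)


if __name__ == "__main__":
    swat(Connection("203.0.113.254", 23562, "198.51.100.7", 443))
```

By default `swat` only prints the commands; with `dry_run=False` it runs them via `subprocess`. Keeping the command construction separate makes the logic testable without root privileges.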

Why do I use `reject with tcp reset`? People often use `drop` to conceal that anything is there. But the scanners already know the web server exists, so there’s nothing left to hide from them. And I’d probably lose the race for resources anyway. So I convey the message that there’s a mechanism at work here that’s keeping an eye on them. “Listen, I just told you that you’re not getting through here, because I know what you’re doing.”

I consider tarpitting to be out of date. It worked when there was a balance of resources. Cloud resources paid for with stolen credit card information, or spun up with stolen cloud credentials, mean that this balance no longer exists. The other side has more resources and no financial constraints, because they’re probably not paying for any of it. In some cases they even set themselves up “parasitically” on free services: I’ve found some scans originating from GitHub Actions.

That said, I’m not sure whether there’s any intelligence at work here. Looking at the patterns, there’s an attempt at 12:00 to find out whether I run a WordPress blog, and the same attempt again at 15:00. The probability that I’ve suddenly switched to WordPress in those 3 hours is low, and nothing in my log files suggests that this is being taken into account.[2]

Of course, I could nail down all access paths so that hardly any scanner gets through. But this is a public blog. As such, by definition, it has to be accessible. The price of accessibility is the massive influx of requests that my web server configuration sends into 403 territory. My goal is not to prevent this access entirely, just to thin it out without taking on too high a risk of false positives or collateral damage.

And that’s exactly what the mechanism does. I’ve named the tool accordingly: apache-flyswatter. I can’t keep the flies out entirely if I want someone to come into the room, but if I see one, I can swat it.

How does this work in practice?

Now I’ve talked a lot about what I did. I’d like to briefly explain how I set it up.

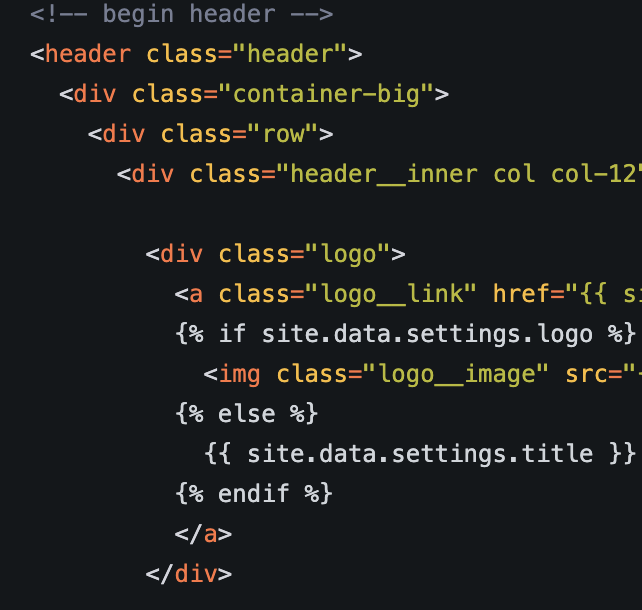

The detection side works as it did yesterday. I still use rewrite rules that “tag” certain requests with an environment variable.

```apache
RewriteCond %{REQUEST_URI} wlwmanifest\.xml$ [NC]
RewriteRule .* - [E=honeypot:1,F,L]
```

I use conditional logging here so that the tagged requests end up in the corresponding additional log files.

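```apache
# Pick up requests tagged by the RewriteRule
SetEnvIf REDIRECT_honeypot 1 honeypot
# Never tag these addresses (an example trusted address plus IPv4 and IPv6 localhost)
SetEnvIf Remote_Addr "^203\.0\.113\.1$" !honeypot
SetEnvIf Remote_Addr "^127\." !honeypot
SetEnvIf Remote_Addr "^::1$" !honeypot

# Request log: kept for manual inspection only
LogFormat "%h %{%Y-%m-%dT%H:%M:%S%z}t \"%r\" %>s \"%{User-Agent}i\"" honeypot
CustomLog /var/log/apache2/honeypot.log honeypot env=honeypot

# Connection log read by apache-flyswatter: time, client IP/port, server IP/port
LogFormat "%t %a %{remote}p %A %{local}p" connlog
CustomLog /var/log/apache2/honeypot_connections.log connlog env=honeypot
```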

Again, I’m working from the hypothesis that every request landing in this log file is malicious, or at least questionable. Under the site’s intended use, there is no legitimate reason for these requests.

I have two log files. The first log file, /var/log/apache2/honeypot.log, was used by the old mechanism. It’s no longer used by any tool. I’ve kept it so I can manually check what kind of requests land there.

I would strongly recommend keeping it. You could also use the timestamp in the regular access log to find out what kind of request it was. But if you have `tail -f` running on both `/var/log/apache2/honeypot.log` and `/var/log/apache2/honeypot_connections.log` in parallel, it’s significantly easier to establish the correlation quickly.
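If you’d rather not eyeball the two logs side by side, the correlation can also be done after the fact. A minimal sketch, assuming the two `LogFormat` definitions above, `HostnameLookups` switched off (so `%h` is an IP address), and that both entries of one request carry the same timestamp to the second, since both formats log the time the request was received. `correlate` is a hypothetical helper, not part of apache-flyswatter.

```python
from __future__ import annotations

import re
from collections import defaultdict
from datetime import datetime

# honeypot.log:             %h %{%Y-%m-%dT%H:%M:%S%z}t "%r" %>s "%{User-Agent}i"
HONEYPOT_RE = re.compile(r'^(?P<ip>\S+) (?P<ts>\S+) "(?P<req>[^"]*)" (?P<status>\d{3}) ')
# honeypot_connections.log: %t %a %{remote}p %A %{local}p
CONN_RE = re.compile(r"^\[(?P<ts>[^\]]+)\] (?P<ip>\S+) (?P<sport>\d+) ")


def correlate(honeypot_lines, conn_lines) -> list[tuple[str, str, str]]:
    """Pair each connection entry with the request(s) from the same IP in the same second.

    Request lines containing escaped quotes don't match HONEYPOT_RE and are skipped.
    """
    requests: dict[tuple[str, datetime], list[str]] = defaultdict(list)
    for line in honeypot_lines:
        m = HONEYPOT_RE.match(line)
        if m:
            ts = datetime.strptime(m["ts"], "%Y-%m-%dT%H:%M:%S%z")
            requests[(m["ip"], ts)].append(m["req"])
    pairs = []
    for line in conn_lines:
        m = CONN_RE.match(line)
        if m:
            ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
            # Aware datetimes compare by instant, so differing UTC offsets still match
            for req in requests.get((m["ip"], ts), []):
                pairs.append((m["ip"], m["sport"], req))
    return pairs
```

Matching on IP plus second is deliberately coarse: several requests from one scanner within the same second all pair with each of its connection entries from that second.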

The log file I’m working with now contains different information:

- Timestamp

- Source IP

- Source port

- Destination IP

- Destination port

The new mechanism doesn’t need more than that: this is exactly the data the script needs to carry out the three steps.
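To make the five fields concrete, here is a minimal sketch of parsing one line of `honeypot_connections.log`, assuming exactly the `connlog` format above. A line then looks like `[18/Apr/2026:20:00:26 +0200] 203.0.113.254 23562 198.51.100.7 443` (example values). `parse_connlog_line` and `ConnEntry` are hypothetical names, not taken from apache-flyswatter.

```python
from __future__ import annotations

import ipaddress
import re
from dataclasses import dataclass
from datetime import datetime

# Matches one line written by: LogFormat "%t %a %{remote}p %A %{local}p" connlog
CONNLOG_RE = re.compile(
    r"^\[(?P<ts>[^\]]+)\] (?P<src>\S+) (?P<sport>\d+) (?P<dst>\S+) (?P<dport>\d+)$"
)


@dataclass(frozen=True)
class ConnEntry:
    timestamp: datetime
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int


def parse_connlog_line(line: str) -> ConnEntry | None:
    """Return the parsed entry, or None if the line doesn't fit the connlog format."""
    m = CONNLOG_RE.match(line.strip())
    if m is None:
        return None
    try:
        # ip_address() rejects anything that isn't a valid IPv4/IPv6 address
        src = ipaddress.ip_address(m["src"])
        dst = ipaddress.ip_address(m["dst"])
        # Apache's %t looks like "18/Apr/2026:20:00:26 +0200"
        ts = datetime.strptime(m["ts"], "%d/%b/%Y:%H:%M:%S %z")
    except ValueError:
        return None
    return ConnEntry(ts, str(src), int(m["sport"]), str(dst), int(m["dport"]))
```

Rejecting malformed lines instead of raising keeps a long-running reader alive if the log ever contains something unexpected.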

You need three components:

- The config file

- The systemd unit

- The script
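The post doesn’t show what goes into the config file. As an illustration of how such a script might read its settings, here is a hypothetical INI-style layout with made-up section and key names (`flyswatter`, `log_path`, `ban_seconds`, `ports`), parsed with the standard library’s `configparser`. The defaults mirror the values from this post; the real `apache-flyswatter.conf` may look entirely different.

```python
from __future__ import annotations

import configparser
from dataclasses import dataclass

# Hypothetical section and key names; the real config format is not shown in the post.
SECTION = "flyswatter"
DEFAULTS = {
    "log_path": "/var/log/apache2/honeypot_connections.log",
    "ban_seconds": "120",
    "ports": "80, 443",
}


@dataclass(frozen=True)
class Settings:
    log_path: str
    ban_seconds: int
    ports: tuple[int, ...]


def load_settings(text: str) -> Settings:
    """Parse INI text; any key that is missing falls back to DEFAULTS."""
    parser = configparser.ConfigParser()
    parser.read_dict({SECTION: DEFAULTS})
    parser.read_string(text)  # values from the file override the defaults
    section = parser[SECTION]
    ban_seconds = section.getint("ban_seconds")
    if ban_seconds <= 0:
        raise ValueError("ban_seconds must be positive")
    ports = tuple(int(p) for p in section["ports"].split(","))
    return Settings(section["log_path"], ban_seconds, ports)


if __name__ == "__main__":
    # Example: a 24-hour ban instead of 120 seconds
    print(load_settings("[flyswatter]\nban_seconds = 86400\n"))
```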

Download the three components to your system and install them:

```sh
install -m 0755 apache-flyswatter /usr/local/bin/apache-flyswatter
install -m 0644 apache-flyswatter.conf /etc/apache-flyswatter.conf
install -m 0644 apache-flyswatter.service /etc/systemd/system/apache-flyswatter.service
apt install nftables conntrack iproute2
systemctl daemon-reload
systemctl enable --now apache-flyswatter
```

If you already have the packages installed, that step isn’t necessary, of course. With `journalctl -u apache-flyswatter -f` you should now see the script doing its work.

```
root@v2202604350965450827:~# journalctl -u apache-flyswatter -f
Apr 18 20:00:26 v2202604350965450827 apache-flyswatter[2853]: 2026-04-18 20:00:26,868 INFO SWAT 203.0.113.254:23562 -> 159.195.145.249:443
Apr 18 20:05:31 v2202604350965450827 apache-flyswatter[2853]: 2026-04-18 20:05:31,861 INFO SWAT 203.0.113.254:42408 -> 159.195.145.249:443
Apr 18 20:16:52 v2202604350965450827 apache-flyswatter[2853]: 2026-04-18 20:16:52,293 INFO SWAT 203.0.113.254:50682 -> 159.195.145.249:443
```
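Each SWAT line corresponds to a new entry in `/var/log/apache2/honeypot_connections.log`. The post doesn’t say how the script picks those entries up; one plausible approach is to poll the file the way `tail -f` does. A minimal sketch under that assumption (`read_new_lines` and `follow` are hypothetical names):

```python
from __future__ import annotations

import os
import sys
import time
from typing import Iterator


def read_new_lines(path: str, offset: int) -> tuple[list[str], int]:
    """Return the complete lines appended after `offset`, plus the offset to resume from."""
    if os.path.getsize(path) < offset:
        # File shrank: assume it was truncated or rotated and start from the top.
        # (A rotated file that has already grown past the old offset goes unnoticed.)
        offset = 0
    with open(path, "rb") as fh:
        fh.seek(offset)
        data = fh.read()
    # Stop at the last newline; a half-written line is picked up on the next call.
    end = data.rfind(b"\n") + 1
    lines = data[:end].decode("utf-8", errors="replace").splitlines()
    return lines, offset + end


def follow(path: str, interval: float = 0.5) -> Iterator[str]:
    """Yield lines as they are appended, starting at the current end of file (like tail -f)."""
    offset = os.path.getsize(path)
    while True:
        lines, offset = read_new_lines(path, offset)
        yield from lines
        if not lines:
            time.sleep(interval)


if __name__ == "__main__" and len(sys.argv) > 1:
    for line in follow(sys.argv[1]):
        print(line, flush=True)
```

Each line yielded by `follow` could then be parsed and handed to the reaction step. Handling a log file that is briefly missing during rotation is left out of this sketch.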

If you run `nft list ruleset`, you’ll get output like the following:

```
table inet flyswatter {
	set blocked4 {
		type ipv4_addr
		flags timeout
		elements = { aaa.bbb.ccc.ddd timeout 2m expires 1m30s900ms }
	}

	set blocked6 {
		type ipv6_addr
		flags timeout
	}

	chain input {
		type filter hook input priority filter - 10; policy accept;
		ip saddr @blocked4 tcp dport { 80, 443 } reject with tcp reset
		ip6 saddr @blocked6 tcp dport { 80, 443 } reject with tcp reset
	}
}
```

I’d like to draw your attention to the element in the blocked4 set. You can see that it’s an element with a timeout. The entries clean themselves up — nftables removes them automatically after the timeout expires. I don’t have to go around mopping up after the rules.
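If you want to create the table by hand, or see how a script might set it up at startup, here is a hypothetical helper that renders the table shown above as an nft script, with the table name and ports as parameters. The output could be saved to a file and loaded with `nft -f`. As far as I know, loading it a second time would append the two rules again, so a real implementation would want to guard against that.

```python
from __future__ import annotations


def render_ruleset(table: str = "flyswatter", ports: tuple[int, ...] = (80, 443)) -> str:
    """Render an nft script that recreates the table shown above (hypothetical helper)."""
    port_list = ", ".join(str(p) for p in ports)
    lines = [
        f"table inet {table} {{",
        "\tset blocked4 {",
        "\t\ttype ipv4_addr",
        "\t\tflags timeout",  # elements carry their own expiry and vanish on their own
        "\t}",
        "\tset blocked6 {",
        "\t\ttype ipv6_addr",
        "\t\tflags timeout",
        "\t}",
        "\tchain input {",
        # A lower priority value on the same hook is evaluated earlier
        "\t\ttype filter hook input priority filter - 10; policy accept;",
        f"\t\tip saddr @blocked4 tcp dport {{ {port_list} }} reject with tcp reset",
        f"\t\tip6 saddr @blocked6 tcp dport {{ {port_list} }} reject with tcp reset",
        "\t}",
        "}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_ruleset(), end="")
```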

Observations

After running the script for a few hours, a few things have become apparent:

- They come back. The scanners won’t be deterred.

- 120 seconds is too short to largely free the log files of scanner noise. You still frequently find scanning activity, but it gets shut down quickly.[3] However, the config file lets you set a significantly longer ban time, possibly even the 24 hours used in yesterday’s solution.

- What’s missing now are scan runs that fire off 200 to 300 requests within a short time. The mechanism seems to put a limit on such activity.

- With some bots I’ve noticed that they appear to start their scan run from scratch again. After a certain time, the same request is executed again from the same IP address. As if they were working through a list, and after I’ve cut the connection, they start over from the beginning.
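To check the “they come back” observation against your own data, you can count how often the same IP gets swatted and how far apart the SWATs are. A minimal sketch, assuming the log line format from the journal output above (a Python-logging style timestamp followed by `SWAT src:port -> dst:port`). How IPv6 addresses are printed isn’t shown, so the pattern accepts them with or without brackets. `repeat_offenders` and `gaps_seconds` are hypothetical names.

```python
from __future__ import annotations

import re
import sys
from collections import defaultdict
from datetime import datetime

# Assumed format, based on lines like:
# "... 2026-04-18 20:00:26,868 INFO SWAT 203.0.113.254:23562 -> 159.195.145.249:443"
SWAT_RE = re.compile(
    r"(?P<ts>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}),\d{3} INFO SWAT "
    r"\[?(?P<src>[0-9A-Fa-f:.]+?)\]?:(?P<sport>\d+) -> "
)


def repeat_offenders(lines, min_hits: int = 2) -> dict[str, list[datetime]]:
    """Map each source IP swatted at least `min_hits` times to its sorted SWAT times."""
    hits: dict[str, list[datetime]] = defaultdict(list)
    for line in lines:
        m = SWAT_RE.search(line)
        if m:
            hits[m["src"]].append(datetime.strptime(m["ts"], "%Y-%m-%d %H:%M:%S"))
    return {ip: sorted(ts) for ip, ts in hits.items() if len(ts) >= min_hits}


def gaps_seconds(times: list[datetime]) -> list[int]:
    """Seconds between consecutive SWATs of the same IP."""
    return [int((b - a).total_seconds()) for a, b in zip(times, times[1:])]


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python3 this_script.py saved_journal.txt
    with open(sys.argv[1], encoding="utf-8") as fh:
        for ip, times in sorted(repeat_offenders(fh).items()):
            print(ip, len(times), "SWATs, gaps (s):", gaps_seconds(times))
```

For example, save the output of `journalctl -u apache-flyswatter` to a file and pass its path as the first argument. Short, regular gaps for one IP would fit the “starting over from the top of the list” pattern described above.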

Postscript

I probably could have implemented all of this in fail2ban with nearly equivalent functionality. But I’m currently trying to find ways to assess IP addresses in terms of how high their risk of collateral damage is, because multiple users sit behind them. Such a mechanism would allow me to more safely increase the ban time significantly. I already have a few ideas. It’s easier for me to implement such logic in my own script. I’ll be reporting on this in the coming days.

[1] This blog entry is a work-in-progress report. By the time you read it, the reaction component is probably a little more sophisticated than what’s described here.

[2] Maybe you really do need LLMs to avoid doing mass vulnerability scanning in the dumbest possible way. That would still be a script kiddie, but a script kiddie on steroids and with a sugar rush.

[3] Provided they use patterns that are caught by the detection component.