At some point it also became clear to me that the table of result codes for all IPs (not what they loaded, only what the HTTP server thought of it) had turned into a fairly substantial collection of PII. That is why I kept it only in main memory. Under certain circumstances it would have allowed me to “depseudonymize” the data in the pseudonymized logfile within the first 24 hours. A stopgap solution … yes. A final architecture … not really.

On top of that, this table was pretty annoying. Reboot the machine? Data gone. New daemon version? In most cases, data gone. Along the way I did learn a technique for swapping code on the fly, but it didn’t work for data structures. Need a new field? Data gone! And once the data was gone, the reaction component lost a key input. At some point a function was added that checked whether the service had been running long enough to collect enough data. Given how often things changed, that was rarely the case.

Beyond that, the signal was mostly fairly weak. It was only really strong when an IP made regular feed requests. Those feed requests acted as a kind of heartbeat that the system monitored. My hypothesis was: a scanner doesn’t simulate a feed reader for hours on end.
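The heartbeat check can be sketched roughly like this. It is an illustration, not the daemon’s actual code: the function name, the six-hour window and the rule “at least one feed poll in every hour” are my assumptions.

```python
from datetime import datetime, timedelta


def has_feed_heartbeat(feed_times: list[datetime], now: datetime, hours: int = 6) -> bool:
    """Return True if each of the last `hours` full hours before `now`
    contains at least one feed request from the IP (hypothetical rule)."""
    for i in range(hours):
        window_end = now - timedelta(hours=i)
        window_start = window_end - timedelta(hours=1)
        # One silent hour is enough to break the heartbeat.
        if not any(window_start <= t < window_end for t in feed_times):
            return False
    return True


# Usage: an IP that polled the feed at half past every hour for six hours.
now = datetime(2026, 4, 27, 12, 0)
polls = [now - timedelta(minutes=30 + 60 * k) for k in range(6)]
print(has_feed_heartbeat(polls, now))  # True
```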

For everyone else, the desired signal “there’s more behind this IP than just the abuser” was rather weak. More precisely, it was informative in one direction only. If there were several valid accesses from an IP between hour -24 and hour -1, I could assume it was probably a CGNAT, NAT or VPN. The reverse, however, didn’t hold: my blog simply gets too few accesses for that. I think you’d need several orders of magnitude¹ more traffic. The data was so thin that the statement “because I haven’t seen a 200 response, it’s not a NAT, CGNAT or VPN” would have been pure speculation. So this approach was of limited value to me.
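The asymmetry is easiest to see in code. In this hypothetical sketch, “several” is assumed to mean three valid (200) accesses in the window. The point is that too few hits yield “unknown”, never “not shared”.

```python
from datetime import datetime, timedelta
from enum import Enum


class Shared(Enum):
    PROBABLY = "probably NAT/CGNAT/VPN"
    UNKNOWN = "unknown"  # deliberately not "not shared"


def classify_ip(ok_times: list[datetime], now: datetime, min_hits: int = 3) -> Shared:
    """Count valid (200) accesses between hour -24 and hour -1.

    Several hits suggest other users share the address. Few or no hits
    prove nothing on a low-traffic site, so the answer is UNKNOWN.
    min_hits=3 is an assumed stand-in for "several".
    """
    start = now - timedelta(hours=24)
    end = now - timedelta(hours=1)
    hits = sum(1 for t in ok_times if start <= t < end)
    return Shared.PROBABLY if hits >= min_hits else Shared.UNKNOWN
```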

Now, ideas usually don’t come to you in front of the computer, but on the toilet, while making tea, or in the shower. I had this one while reading: I’m looking at the wrong side of the ban. For me, the information about what happens after the ban would have considerably more value than the information about what happens before the ban.

In the previous solution I was hooked into the firewall log. So I knew that something was happening. A request had been rejected by the firewall. I knew from the rule what it was: HTTP or HTTPS. But I didn’t know what was inside it. My server didn’t either. It refused to have the conversation. I had no log of what the client would have liked to do.

Rebuild

With this thought in mind, I rebuilt the setup. I had originally come at this from the angle that all the noise in the logfiles annoyed me. So a new solution had to keep requests away from my web server just like an IP block does. What doesn’t reach the server doesn’t get logged.

But I don’t necessarily have to block the request entirely. I can also redirect it elsewhere, and that’s exactly what the new solution does. As before, the system hooks into the honeypot log, and the detection component is Apache. Also as before, an IP address can only end up in this file if it is associated with a request that violated a detection pattern, so every IP address in it is handed over to the reaction component.

However, there is now only one reason why an IP address gets blocked outright: if it comes from an ASN on Spamhaus’s “Filter-on-Sight” list, a reject ban is issued. Nothing good comes out of those gates. I don’t even want to know what comes out of there. And that ban is issued straight away for seven days. For all practical purposes that’s a permanent ban, since every further violation extends it by another seven days.

I didn’t want to make the ban permanent outright. If no traffic is coming from an IP — or in some cases a subnet — then I don’t need a filter rule for it. And the existence of the filter rule gives me some visibility that something is coming out of those networks, without me having to look more closely into the logfile. I can also see more quickly where a subnet ban is worthwhile and where it isn’t.
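A minimal in-memory model of such a time-limited, extendable ban could look like this. It is a sketch, not the flyswatter’s code. The class name and the rule that an extension starts from the current expiry are assumptions. In a real setup, nftables sets created with the timeout flag can take care of the expiring themselves.

```python
from dataclasses import dataclass, field

SEVEN_DAYS = 7 * 24 * 3600


@dataclass
class BanTable:
    """Hypothetical model of reject bans that expire unless renewed."""

    expires: dict[str, float] = field(default_factory=dict)

    def violation(self, ip: str, now: float) -> float:
        """Record a violation and return the new expiry time."""
        current = self.expires.get(ip, now)
        # Assumption: extend from the later of "now" and the current expiry,
        # so repeat offenders accumulate time and a lapsed ban starts fresh.
        self.expires[ip] = max(current, now) + SEVEN_DAYS
        return self.expires[ip]

    def is_banned(self, ip: str, now: float) -> bool:
        return self.expires.get(ip, 0.0) > now

    def purge(self, now: float) -> None:
        # No traffic from an address means no filter rule is needed for it.
        self.expires = {ip: t for ip, t in self.expires.items() if t > now}
```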

The decisive difference between this solution and the old one is that alongside the rejects there are now redirects, and a redirect is the default response to hitting the honeypot. If you’ve triggered the detection rules, nftables internally redirects you from the original web server² to another web server³: instead of port 80 you land on port 10080, and instead of port 443 on port 10043⁴. This means all further requests from these IP addresses no longer show up in the primary server’s logfile, so my first requirement was met. Second, I now have an additional logfile that contains only the actions of banned users. I now know what happens after the ban.
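On the reaction side, a ban then boils down to adding the address to an nftables set with a timeout. The following sketch only builds the nft command line. The table name flysw and the set names are hypothetical. It assumes a ruleset whose sets were created with the timeout flag and whose nat prerouting chain contains rules such as “ip saddr @redirect_v4 tcp dport 443 redirect to :10043”.

```python
import ipaddress

ACTIONS = ("redirect", "reject")


def nft_ban_command(ip: str, action: str, timeout: str) -> list[str]:
    """Build the argv for adding `ip` to a timeout-enabled nftables set.

    Assumes a hypothetical table "inet flysw" with the sets redirect_v4,
    redirect_v6, reject_v4 and reject_v6. `timeout` uses nft notation
    such as "1h", "1d" or "7d".
    """
    if action not in ACTIONS:
        raise ValueError(f"unknown action: {action!r}")
    addr = ipaddress.ip_address(ip)  # raises ValueError for invalid input
    set_name = f"{action}_v{addr.version}"
    element = f"{{ {addr.compressed} timeout {timeout} }}"
    return ["nft", "add", "element", "inet", "flysw", set_name, element]


# Usage (needs root and the assumed ruleset):
# subprocess.run(nft_ban_command("192.0.2.7", "redirect", "1d"), check=True)
```

nft joins its arguments into a single command string, so the braces can stay inside one argument.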

What happened after the ban?

# cat c0d0s0.org-access.log-blocked | head -1

a:b:c:: - - [23/Apr/2026:05:25:30 +0200] "GET / HTTP/2.0" 301 854 "-" "curl/8.14.1"

# cat c0d0s0.org-access.log-blocked | tail -1

a.b.c.d - - [27/Apr/2026:05:14:05 +0200] "GET /setup.php HTTP/1.1" 404 14601 "-" "-"

The dataset covers about 4 days.

# wc -l c0d0s0.org-access.log-blocked

3208 c0d0s0.org-access.log-blocked

# wc -l honeypot.log-blocked

2098 honeypot.log-blocked

Based on this, 3208 − 2098 = 1110 requests landed on the redirect server that didn’t match the patterns in the detection component.

The vast majority of these requests are, frankly, candidates for new filter patterns. Only one IP address actually requested HTML files, and there are clear indications that this was just a camouflage pattern. But even if it had been a genuine visitor, I’d consider a false-positive count of one IP acceptable. The first four days at least suggest that the measures are highly specific.
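To look for such cases, a small script over the redirect server’s access log is enough. This sketch assumes Apache’s combined log format (which the log lines above appear to use) and treats any request for a .html or .htm path as a candidate for manual review. The function name is hypothetical. Status codes are deliberately ignored, because on the redirect server even a genuine page request ends in a 404.

```python
import re
from collections import defaultdict

# Apache "combined" format: host ident user [time] "request" status bytes ...
COMBINED = re.compile(
    r'^(?P<host>\S+) \S+ \S+ \[[^\]]+\] "(?P<request>(?:[^"\\]|\\.)*)" (?P<status>\d{3}) '
)


def html_requesters(lines) -> dict[str, list[str]]:
    """Map client address -> requested .html/.htm paths (any status)."""
    hits: dict[str, list[str]] = defaultdict(list)
    for line in lines:
        m = COMBINED.match(line)
        if not m:
            continue
        parts = m["request"].split()
        if len(parts) < 2:
            continue  # malformed request line, e.g. "-"
        path = parts[1].split("?", 1)[0]
        if path.lower().endswith((".html", ".htm")):
            hits[m["host"]].append(path)
    return dict(hits)


# Usage:
# with open("/var/log/apache2/c0d0s0.org-access.log-blocked") as f:
#     for ip, paths in html_requesters(f).items():
#         print(ip, len(paths), paths[:5])
```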

New detection logic

I took the opportunity to put the detection logic on a new footing as well.

Determining that something should become a 403, and actually making it a 403, are now separate functions.

A large set of SetEnvIfNoCase statements sets an environment variable, honeypot, to a short identifier for the matching rule.

SetEnvIfNoCase Request_URI "/wp-[a-z-]+\.php" honeypot=S2-WP001

If this environment variable is set, a central rewrite rule kicks in that actually produces the 403.

RewriteCond %{ENV:honeypot} !^$

RewriteRule .* - [F,L]
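The two-stage logic can be modelled in a few lines of Python. This is a hypothetical illustration of the semantics, not something Apache runs: each matching SetEnvIfNoCase line overwrites the variable, and a single central rule turns any non-empty identifier into a 403. The S2-WP001 and S2-WP008 patterns are taken from this post; their order in the file is assumed.

```python
import re
from typing import Optional

# (pattern, identifier) pairs in assumed file order, as SetEnvIfNoCase sees them.
RULES = [
    (re.compile(r"/wp-[a-z-]+\.php", re.IGNORECASE), "S2-WP001"),
    (re.compile(r"(wp-conflg|setup-config|wp-setup|readme\.html|license\.txt)", re.IGNORECASE), "S2-WP008"),
]


def classify(request_uri: str) -> Optional[str]:
    """Stage 1: every matching rule overwrites `honeypot`, so the LAST
    matching rule wins, not the most specific one."""
    honeypot = None
    for pattern, rule_id in RULES:
        if pattern.search(request_uri):
            honeypot = rule_id
    return honeypot


def respond(request_uri: str) -> int:
    """Stage 2: one central rule turns any non-empty identifier into 403;
    200 stands for 'handled normally'."""
    return 403 if classify(request_uri) else 200


print(classify("/wp-setup.php"))  # S2-WP008, although S2-WP001 matches too
```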

Improvement in monitoring

This rebuild was necessary primarily because I wanted to introduce one significant change. The environment variable honeypot now contains a short identifier that names the rule. This means I can carry that information through into the log.

The last matching rule wins, not the one that fits best. Within my tangle of RewriteRules, unfortunately, I couldn’t reliably get the correct content into that variable. Sometimes it said -, sometimes 1; only rarely did it contain the correct rule identifier. Only after switching to SetEnvIfNoCase was the variable honeypot reliably set. Or so I thought. Here too I had to deal with redirects and the resulting renaming of environment variables. Serving the ErrorDocument is internally a redirect, and Apache renames the variables of the original request with a REDIRECT_ prefix, so honeypot becomes REDIRECT_honeypot. I log both honeypot and REDIRECT_honeypot. A somewhat brute-force solution, but it makes sure the information I want ends up in the files.

LogFormat "%t %a %{remote}p %A %{local}p \"%r\" %{honeypot}e %{REDIRECT_honeypot}e" connlog

The flyswatter then assembles the correct reason for filtering from both:

2026-04-27 19:28:54,469 INFO ipapi check a.b.c.d: triggered (datacenter) -> extending ban to 86400s (redirect)

2026-04-27 19:28:54,541 INFO Verdict on a.b.c.d: REASON:S2-WP008 NOSELF NOFASTTRACK NOBADASN:9999999/NARF-ASN NOABUSE:32 IPAPI:datacenter NOGROUP NOPREBAN DURATION:1d/redirect
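The “assemble from both” step might look like this. It is a sketch under assumptions: the regex mirrors the connlog LogFormat shown above, Apache writes - for an unset variable, and preferring honeypot over REDIRECT_honeypot is my choice, not necessarily what the flyswatter does.

```python
import re
from typing import NamedTuple, Optional

# Mirrors: %t %a %{remote}p %A %{local}p "%r" %{honeypot}e %{REDIRECT_honeypot}e
CONNLOG = re.compile(
    r'^\[(?P<time>[^\]]+)\] (?P<client>\S+) (?P<client_port>\d+) '
    r'(?P<server>\S+) (?P<server_port>\d+) "(?P<request>(?:[^"\\]|\\.)*)" '
    r'(?P<honeypot>\S+) (?P<redirect_honeypot>\S+)$'
)


class Hit(NamedTuple):
    client: str
    server_port: int
    request: str
    reason: Optional[str]


def parse_connlog(line: str) -> Optional[Hit]:
    m = CONNLOG.match(line.rstrip("\n"))
    if not m:
        return None
    # "-" means the variable was unset. After the internal redirect to the
    # ErrorDocument, the identifier may only survive as REDIRECT_honeypot.
    candidates = (m["honeypot"], m["redirect_honeypot"])
    reason = next((v for v in candidates if v != "-"), None)
    return Hit(m["client"], int(m["server_port"]), m["request"], reason)
```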

I can see right away which rule led to the ban: Section 2, WordPress rule 8.

# grep "S2-WP008" .htaccess

SetEnvIfNoCase Request_URI "(wp-conflg|setup-config|wp-setup|readme\.html|license\.txt)" honeypot=S2-WP008
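Because every rule carries an identifier, the ruleset can also be regression-tested outside Apache. This hypothetical helper extracts the SetEnvIfNoCase Request_URI lines from an .htaccess file and reports which identifiers match a given URI. Apache uses PCRE while Python uses its own re module. For simple patterns like these they behave the same, but that is an assumption worth checking for more exotic rules.

```python
import re

# Matches lines like: SetEnvIfNoCase Request_URI "<regex>" honeypot=<ID>
RULE_LINE = re.compile(
    r'^\s*SetEnvIfNoCase\s+Request_URI\s+"(?P<pattern>(?:[^"\\]|\\.)*)"\s+honeypot=(?P<rule_id>\S+)'
)


def load_rules(htaccess_text: str) -> dict[str, re.Pattern]:
    """Extract identifier -> compiled pattern from .htaccess text."""
    rules = {}
    for line in htaccess_text.splitlines():
        m = RULE_LINE.match(line)
        if m:
            # NoCase -> IGNORECASE; Python's re stands in for Apache's PCRE.
            rules[m["rule_id"]] = re.compile(m["pattern"], re.IGNORECASE)
    return rules


def matching_rules(rules: dict[str, re.Pattern], uri: str) -> list[str]:
    """All identifiers whose pattern matches `uri`, in file order."""
    return [rule_id for rule_id, rx in rules.items() if rx.search(uri)]


# Usage:
# with open(".htaccess") as f:
#     print(matching_rules(load_rules(f.read()), "/wp-admin/setup-config.php"))
```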

Implementing the new logic on two servers

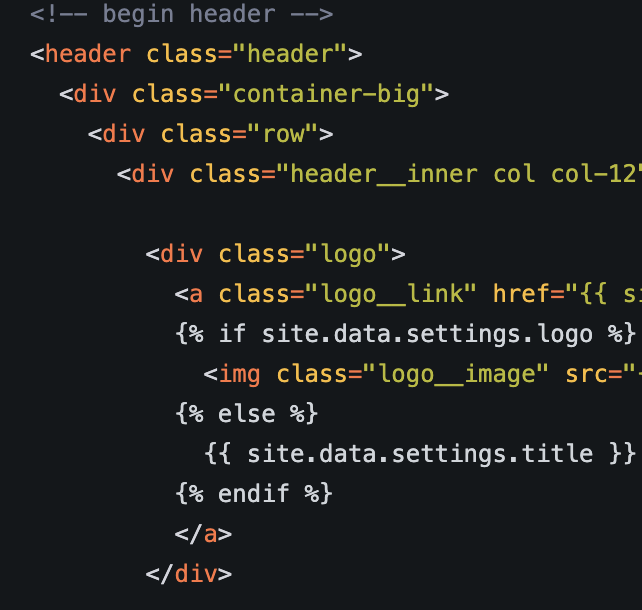

For this I have two .htaccess templates in my repository⁵. This version goes into the .htaccess of the primary server (reachable on 80 and 443).

A slightly modified ruleset is meant for the .htaccess of the redirect server. Note in particular the second whitelist rule, SetEnvIf Request_URI "^/notfound/". That’s where the always-served document I mentioned earlier lives. This second whitelist entry is needed so that the rewrite logic doesn’t fire on /notfound/index.html.

/notfound/index.html in turn is wired up via the ErrorDocument block.

ErrorDocument 404 /notfound/

ErrorDocument 403 /notfound/

ErrorDocument 410 /notfound/

Since there’s almost nothing else on the web server, practically every request becomes a 404 and the corresponding ErrorDocument is served. And because I’ve assigned the same ErrorDocument to 403 and 410, the redirect server only ever responds with this one document.

You could also just copy the .htaccess from the primary server to the redirect server and add the second whitelist rule. Since the redirect server is supposed to display exactly one page, please remove all other rewrite rules and redirects in the process. Anything else leads to surprisingly hard-to-find problems (for example, the main web server’s site still being served).

Feeds

There is one exception: the feeds remain reachable even on the redirect server. So the server isn’t entirely contentless. My readership consumes this blog largely as an RSS feed. If for whatever reason a feed reader’s IP gets banned, they can still read the feeds. The collateral damage of a false-positive block is therefore considerably reduced.

I had previously considered building an exception for IPs that regularly poll the RSS feeds, until I noticed that it’s significantly simpler and more stable to just serve the feeds from the redirect server as well. That way I can also block an IP that scans and pulls feeds at the same time.
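Put together, the redirect server’s behaviour can be summarised in a small model. The feed paths are placeholders (use the URLs your feeds actually live under), and the function is purely illustrative: whitelisted paths are served, known patterns get a 403 and everything else a 404, with the same /notfound/ document in both error cases.

```python
from typing import Optional

# Placeholders: use whatever URLs your feeds actually live under.
FEED_PREFIXES = ("/feed/", "/index.xml")
NOTFOUND = "/notfound/"


def redirect_server_response(path: str, honeypot_rule: Optional[str]) -> tuple[int, str]:
    """Return (status, document served) for a request on the redirect server."""
    if path.startswith(NOTFOUND) or path.startswith(FEED_PREFIXES):
        # Whitelisted: served normally, never turned into a 403.
        return 200, path
    if honeypot_rule:
        return 403, NOTFOUND  # known pattern: 403 + the one ErrorDocument
    return 404, NOTFOUND      # nothing else exists: 404 + the same document
```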

Additional information source

The previous version already queried an IP’s reputation (at AbuseIPDB) and issued a 1-day ban once a certain threshold was exceeded.

In this version another information source has been added. The system queries ipapi.is to learn more about the IP addresses that ended up in the honeypot (and only those). Among other things, this service reports whether an IP is a VPN endpoint, a TOR exit node or a proxy. If so, the ban is shortened to one hour, since these are the addresses most likely to have legitimate users behind them as well. I built a caching daemon for the lookup, because the free queries are limited and I want to stay within that budget.
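The duration decision itself is small. This sketch assumes the lookup result is a dict with boolean fields named is_vpn, is_tor and is_proxy; verify those names against the ipapi.is documentation. The durations follow the text: one hour for shared exit points, one day otherwise.

```python
from typing import Mapping, Optional

ONE_HOUR = 3600
ONE_DAY = 86400
SHARED_FLAGS = ("is_vpn", "is_tor", "is_proxy")  # assumed ipapi.is field names


def ban_seconds(info: Optional[Mapping[str, object]]) -> int:
    """Redirect-ban duration from an ipapi.is-style lookup result.

    None (no data, e.g. quota exhausted) falls back to the default day.
    """
    if info and any(info.get(flag) is True for flag in SHARED_FLAGS):
        # VPN, TOR or proxy: most likely to hide legitimate users as well.
        return ONE_HOUR
    return ONE_DAY
```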

That said: if the quota is exhausted, the worst that can happen is that all bans are issued at the default length of one day. The system is set up to fail conservatively at this point.
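A caching wrapper with a query budget that fails conservatively could look roughly like this. The class name, the seven-day cache lifetime and the default of 1000 lookups per day are placeholders, not ipapi.is’s actual limits. fetch stands in for whatever function performs the real HTTP request.

```python
import time
from typing import Callable, Optional


class CachedLookup:
    """Hypothetical caching wrapper with a daily budget around a rate-limited API."""

    def __init__(self, fetch: Callable[[str], dict], ttl: float = 7 * 86400,
                 daily_quota: int = 1000, clock: Callable[[], float] = time.time):
        self._fetch = fetch
        self._ttl = ttl
        self._quota = daily_quota
        self._clock = clock
        self._cache: dict[str, tuple[float, dict]] = {}
        self._calls: list[float] = []  # timestamps of real API calls

    def lookup(self, ip: str) -> Optional[dict]:
        """Cached result, fresh result, or None when no data can be had."""
        now = self._clock()
        cached = self._cache.get(ip)
        if cached and now - cached[0] < self._ttl:
            return cached[1]
        # Only calls from the last 24 hours count against the budget.
        self._calls = [t for t in self._calls if now - t < 86400]
        if len(self._calls) >= self._quota:
            return None  # budget spent: caller falls back to the default ban
        self._calls.append(now)
        try:
            result = self._fetch(ip)
        except Exception:
            return None  # API or network error: fail conservatively as well
        self._cache[ip] = (now, result)
        return result
```

A returned None simply means “no extra information”, so the caller issues the default one-day ban.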

There are a number of additional conditions in my version of the script that shorten or extend a ban. I’ve removed those from the version in the Codeberg repository, because they work for me but not necessarily for anyone else. Feel free to go wild here.

Weakness

While I was at it, I found another weakness in this mechanism: a non-trivial number of honeypot requests still reach the primary server. This happens whenever the scanner works with high parallelism. For example, 16 requests come in at the same time, but the mechanism hasn’t fired yet, because it’s hooked into the logfile and has to wait for the first entries to appear there. Only the follow-up batches end up on the redirect server.

The response to these requests, however, is identical on the primary server and on the redirect server: 403 Forbidden for everything that’s known, 404 for everything that isn’t.

The access log of the redirect server is very tidy, which makes a single command quite handy for distilling new rules:

awk '$9 == 404 { print $7 }' /var/log/apache2/c0d0s0.org-access.log-blocked | sort | uniq -c | sort -n

Anything that’s a 403 is already covered by rules. Anything that returns 200 is intentional. Anything that’s a 404 isn’t covered by the rules. It’s relatively easy to define rules from the rest.
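The step from the 404 list to new rules can be partly automated. This hypothetical helper reads combined-format lines, counts 404 paths (query string stripped) and drafts one anchored, escaped SetEnvIfNoCase line per frequent path. The S9-NEW prefix is a placeholder for a real section and rule identifier, and every draft still needs a human look before it goes into the .htaccess.

```python
import re
from collections import Counter

# Combined log format; captures the request path and the status code.
LINE = re.compile(r'^\S+ \S+ \S+ \[[^\]]+\] "\S+ (?P<path>\S+)[^"]*" (?P<status>\d{3}) ')


def suggest_rules(log_lines, min_count: int = 2, prefix: str = "S9-NEW") -> list[str]:
    """Draft SetEnvIfNoCase lines for frequently requested 404 paths."""
    counts = Counter()
    for line in log_lines:
        m = LINE.match(line)
        if m and m["status"] == "404":
            counts[m["path"].split("?", 1)[0]] += 1  # ignore query strings
    drafts = []
    for n, (path, hits) in enumerate(counts.most_common(), start=1):
        if hits < min_count:
            break  # most_common() is sorted, so the rest are rarer still
        # Anchored exact-path pattern; widen it by hand where it makes sense.
        drafts.append(f'SetEnvIfNoCase Request_URI "^{re.escape(path)}$" honeypot={prefix}{n:03d}')
    return drafts
```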

Strictly speaking, this expansion isn’t necessary. From what I’ve observed, while individual requests may not be caught by the ruleset, scan runs almost always contain at least one pattern that is caught. And that’s enough to trigger the redirect. So not full coverage, but enough tripwires that one of them fires during typical scanner runs.

New version

I’ve uploaded a new version of the setup. You can find it with instructions here. The warning still applies that this is mostly vibecoded. So: caveat usor!⁶

Closing remark

I think this will be the last blog entry on this topic, because the experimenting, both with the idea itself and with vibecoding, has run its course. I’m still working through my conclusions on both fronts, and it will take a while before they make it into a post of their own. That said, if a new idea on this topic hits me on the toilet or in the kitchen, I’ll definitely post about the implementation here.

1. In my opinion, “order of magnitude” is misused about as often as “quantum leap”.
2. I will call this server the “primary server”.
3. From now on I call it the “redirect server”.
4. Yes, that was a typo. I just never bothered to fix it.
5. I still need to restructure these so the ruleset isn’t duplicated. Later …
6. “Caveat utilitor” is probably more correct (my Latin is so far back that there were still native speakers around), but “caveat usor” sounds better …