My last article was about the reading side of ZFS. But what about writing? As Ben correctly stated in his presentation about ZFS: Forget your good old UFS knowledge. ZFS is different. Writing data to disk, in particular, works differently than in other filesystems, and that’s the part I want to concentrate on in this article. I have simplified things a little for ease of understanding. As usual, the source code is the authoritative source of information about this stuff.

Sync Writes vs. Async Writes

First of all: ZFS is a transactional filesystem, and the way it writes data to disk is more sophisticated than in a traditional filesystem. An operating system knows two different kinds of writes: synchronous and asynchronous. The difference: when a synchronous write returns, the data is on stable storage. When an asynchronous write returns, the data sits in the system’s cache, waiting to be written for real. The best-known generators of sync writes are databases: to conform to the ACID paradigm, a database has to be sure that data written to it has actually been persisted to non-volatile media. But there are others: a mail server has to use sync writes to ensure that no mail is lost in a power outage.
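To make the difference concrete, here is a small Python sketch of both kinds of writes on a POSIX system, using only the standard `os` module. A plain buffered write returns as soon as the data is in the cache, while opening the file with `O_SYNC` (or calling `fsync()` after writing) makes the call wait until the OS reports the data as stable. How durable "stable" really is also depends on the filesystem and the drive’s write cache, so treat this as an illustration of the semantics, not a durability guarantee. The helper names are made up for this example.

```python
import os
import tempfile


def async_write(path: str, data: bytes) -> None:
    # Returns once the data is in the OS cache; it reaches the disk later.
    with open(path, "wb") as f:
        f.write(data)


def sync_write(path: str, data: bytes) -> None:
    # O_SYNC (POSIX only): each write() returns only after the data is stable.
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC | os.O_SYNC, 0o644)
    try:
        view = memoryview(data)
        while view:                      # os.write() may write fewer bytes
            written = os.write(fd, view)
            view = view[written:]
    finally:
        os.close(fd)


def write_then_fsync(path: str, data: bytes) -> None:
    # Alternative: an ordinary write followed by an explicit flush to disk.
    with open(path, "wb") as f:
        f.write(data)
        f.flush()              # move Python's user-space buffer into the kernel
        os.fsync(f.fileno())   # block until the kernel reports the data as stable


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        for writer in (async_write, sync_write, write_then_fsync):
            target = os.path.join(tmp, writer.__name__)
            writer(target, b"mail #42")
            with open(target, "rb") as f:
                print(writer.__name__, f.read())
```

A mail server or database would use one of the two synchronous variants for every write it must not lose.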

ZFS Transactions

The ZFS POSIX Layer (ZPL, the reason why ZFS looks like a POSIX-compliant filesystem to you) encapsulates all write operations, metadata as well as data, into transactions. The ZPL opens a transaction, puts some write calls into it, and commits it. But not every transaction is written to disk on its own; transactions are collected into transaction groups.
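The transaction lifecycle can be modeled in a few lines. This is a hypothetical Python sketch, loosely inspired by the DMU calls `dmu_tx_create()`, `dmu_tx_assign()` and `dmu_tx_commit()` in the ZFS source; the class and method names are invented for illustration. A transaction joins the currently open transaction group, its writes are recorded in that group as they happen, and committing it only releases its hold on the group.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class TxGroup:
    txg: int                                   # transaction group number
    holds: int = 0                             # assigned, not yet committed
    writes: List[Tuple[str, bytes]] = field(default_factory=list)


class Transaction:
    def __init__(self, group: TxGroup) -> None:
        self.group = group
        group.holds += 1                       # the group can't quiesce while held

    def write(self, obj: str, data: bytes) -> None:
        # The change becomes dirty data of this transaction group right away.
        self.group.writes.append((obj, data))

    def commit(self) -> None:
        self.group.holds -= 1                  # release the hold on the group


class Pool:
    def __init__(self) -> None:
        self.open_group = TxGroup(txg=1)

    def begin(self) -> Transaction:
        # Assign the new transaction to whatever group is open right now.
        return Transaction(self.open_group)


pool = Pool()
tx = pool.begin()
tx.write("file.txt:data", b"hello")            # file data
tx.write("file.txt:mtime", b"1230768000")      # metadata goes the same way
tx.commit()
```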

ZFS Transaction Groups

All writes in ZFS are handled in transaction groups: both synchronous and asynchronous writes finally reach the disk this way. (I will come back to the differences between the two later in this article.) Writing data to disk in ZFS is thus largely built on the concept of transaction groups. At any time, there are at most three transaction groups in use per pool:

- Open group: This is the one where the filesystem places new writes.

- Quiesce group: No new writes can be added to it; it waits until all transactions already in it have been committed.

- Sync group: This is the group where the data is finally written to disk.

Working with transaction groups instead of single transactions gives you a nice advantage: you can bundle many transactions into one large write burst instead of many small ones, saving IOPS from your IOPS budget.
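A rough way to see the IOPS savings: if the blocks of one transaction group end up next to each other on disk, the sync can issue a few large I/Os instead of one per transaction. The Python sketch below merges adjacent (offset, length) extents up to a maximum I/O size. The contiguous layout and the 128 KiB cap are assumptions for illustration; how ZFS actually allocates and aggregates I/Os depends on its allocator and I/O scheduler.

```python
from typing import List, Tuple

Extent = Tuple[int, int]  # (offset in bytes, length in bytes)


def coalesce(extents: List[Extent], max_io: int) -> List[Extent]:
    """Merge adjacent extents into as few I/Os as possible.

    Two extents are merged only if they touch and the merged I/O stays
    within max_io bytes; extents larger than max_io are issued unchanged.
    """
    ios: List[Extent] = []
    for off, length in sorted(extents):
        if ios:
            last_off, last_len = ios[-1]
            if last_off + last_len == off and last_len + length <= max_io:
                ios[-1] = (last_off, last_len + length)
                continue
        ios.append((off, length))
    return ios


# 1,000 small 4 KiB writes collected in one transaction group,
# assumed to be allocated back to back.
pending = [(i * 4096, 4096) for i in range(1000)]
bursts = coalesce(pending, max_io=128 * 1024)
print(len(pending), "writes ->", len(bursts), "I/Os")  # 1000 writes -> 32 I/Os
```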

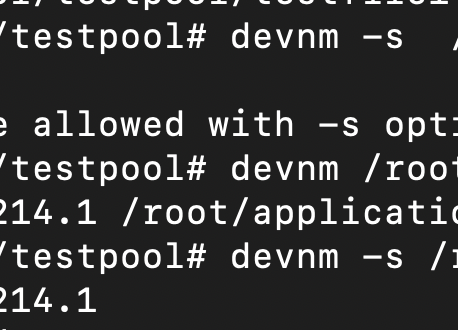

The Transaction Group Number

Throughout its lifecycle in the system, every transaction group has a unique number. Together with the uberblock, this number plays an important role in recovering after a crash, because the uberblock stores the transaction group number it belongs to. You have to know that the last operation in every transaction group sync is the update of the uberblock: a single block write at the end. The uberblock is stored in a ring buffer of 128 slots, and its slot is the transaction group number modulo 128. Finding the current uberblock is easy: it’s the one with the highest transaction group number.
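The slot arithmetic is simple enough to sketch. In this hypothetical Python model, each slot stores only a transaction group number; a real uberblock carries much more (such as the pointer to the root of the block tree), and its on-disk layout is not modeled here.

```python
from typing import List, Optional

RING_SLOTS = 128  # size of the uberblock ring, as described above


class UberblockRing:
    def __init__(self) -> None:
        self.slots: List[Optional[int]] = [None] * RING_SLOTS

    def write(self, txg: int) -> int:
        # Last step of a txg sync: write the uberblock into slot txg mod 128,
        # overwriting the entry written 128 transaction groups earlier.
        slot = txg % RING_SLOTS
        self.slots[slot] = txg
        return slot

    def current(self) -> Optional[int]:
        # The active uberblock is the one with the highest txg number.
        written = [txg for txg in self.slots if txg is not None]
        return max(written) if written else None


ring = UberblockRing()
for txg in range(1, 301):
    ring.write(txg)
print(ring.current(), 300 % RING_SLOTS)  # 300 44
```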

Many of you will ask: What happens when the power fails while the uberblock is being written? Well, there is an additional safeguard: the uberblock is protected by a checksum. So the valid uberblock is the one with the highest transaction group number and a correct checksum. Add copy-on-write (as you don’t overwrite data, you don’t harm existing data, and once the new uberblock has been written successfully, the old state isn’t relevant anymore) and the ZIL, and you get an always-consistent on-disk state.
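Here is the selection rule with the checksum added, again as a hypothetical Python sketch. SHA-256 from `hashlib` stands in for the checksum ZFS uses for uberblocks, and the two-field layout (txg number plus a made-up root pointer) is invented for the example. A torn write is simulated by damaging one byte.

```python
import hashlib
import struct
from typing import Iterable, Optional, Tuple

Uberblock = Tuple[int, int]  # (txg number, root pointer); simplified layout


def encode_uberblock(txg: int, root: int) -> bytes:
    payload = struct.pack("<QQ", txg, root)
    return payload + hashlib.sha256(payload).digest()  # 16 + 32 bytes


def decode_uberblock(raw: bytes) -> Optional[Uberblock]:
    payload, digest = raw[:16], raw[16:]
    if len(raw) != 48 or hashlib.sha256(payload).digest() != digest:
        return None  # torn or corrupted write: ignore this slot
    return struct.unpack("<QQ", payload)


def select_uberblock(slots: Iterable[bytes]) -> Optional[Uberblock]:
    # Highest txg number among the slots whose checksum verifies.
    valid = [ub for ub in map(decode_uberblock, slots) if ub is not None]
    return max(valid, default=None)


good_41 = encode_uberblock(41, 0x1000)
good_42 = encode_uberblock(42, 0x2000)
torn_43 = bytearray(encode_uberblock(43, 0x3000))
torn_43[20] ^= 0xFF                      # power failed mid-write
print(select_uberblock([good_41, good_42, bytes(torn_43)]))  # (42, 8192)
```

The newest entry is skipped because its checksum doesn’t match, so the pool falls back to the last uberblock that was written completely.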

Implementation in ZFS

There are actually three threads that control the writes in ZFS.

First, there is the txg_timelimit_thread(). It wakes up at a configurable interval (the default is 5 seconds) and tries to move the open transaction group to the quiesce state. Tries? Yes: in a single pool, there can be at most one TXG in each state at any given time. Thus, as long as the sync hasn’t completed, you can’t move the next TXG from quiesce to sync, and as long as there is still a quiesced TXG, you can’t move the open TXG to the quiesce state. In such a situation, ZFS blocks new write calls to give the storage a chance to catch up. This happens when you issue writes to your filesystem faster than your storage can handle them.
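The "at most one TXG per state" rule can be modeled as three slots. This Python sketch is hypothetical (the class and method names don’t come from the ZFS source) and leaves out the actual work. It only shows when an advance is possible and when it has to fail, which is the situation in which new writes are held back.

```python
from typing import Optional


class TxgPipeline:
    def __init__(self) -> None:
        self.open = 1                      # there is always an open txg
        self.quiescing: Optional[int] = None
        self.syncing: Optional[int] = None

    def try_close_open(self) -> bool:
        # What the timer thread attempts every interval (5 s by default).
        if self.quiescing is not None:
            return False                   # previous txg still quiescing: writers wait
        self.quiescing, self.open = self.open, self.open + 1
        return True

    def try_start_sync(self) -> bool:
        # Hand the quiesced txg to the sync thread if that slot is free.
        if self.quiescing is None or self.syncing is not None:
            return False
        self.syncing, self.quiescing = self.quiescing, None
        return True

    def finish_sync(self) -> None:
        self.syncing = None                # uberblock written, slot free again


p = TxgPipeline()
p.try_close_open()      # txg 1 -> quiesce, txg 2 opens
p.try_start_sync()      # txg 1 -> sync
p.try_close_open()      # txg 2 -> quiesce, txg 3 opens
print(p.try_start_sync(), p.try_close_open())  # False False: storage is behind
```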

Since last year, write throttling has been implemented differently: as soon as the write rate surpasses a certain limit, ZFS slows down write-intensive jobs a little (by one tick per write request). Furthermore, the system observes whether it can write the outstanding data within the time limit defined by txg_timelimit_thread(). If you really want to dig into this issue and its solution, I recommend Roch’s blog article “The New ZFS Write Throttle”.
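The idea behind the newer throttle can be sketched as a per-write decision. In this hypothetical Python function, the pool’s observed sync throughput times the 5-second target gives the amount of dirty data one transaction group may hold. Writes that push the group close to that limit get delayed by one tick, and writes beyond it have to wait. The 7/8 threshold and the 10 ms tick are assumptions chosen for illustration; Roch’s article describes the real mechanism.

```python
from typing import Optional

TICK_S = 0.01          # assumption: one clock tick of 10 ms
TARGET_SYNC_S = 5.0    # time budget for syncing one transaction group
SOFT_FRACTION = 7 / 8  # assumption: start delaying at 7/8 of the limit


def write_delay(dirty_bytes: int, write_size: int,
                sync_bytes_per_s: float) -> Optional[float]:
    """Return the delay in seconds for a new write, or None if it must block.

    sync_bytes_per_s is the observed rate at which the pool writes out data.
    """
    limit = sync_bytes_per_s * TARGET_SYNC_S   # dirty data we can sync in time
    after = dirty_bytes + write_size
    if after > limit:
        return None                            # wait until this txg is closed
    if after > SOFT_FRACTION * limit:
        return TICK_S                          # slow the writer down a little
    return 0.0


rate = 100e6                                   # hypothetical 100 MB/s pool
for dirty in (0, 450_000_000, 500_000_000):
    print(dirty, write_delay(dirty, 4096, rate))
```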

Let’s assume the TXG has moved from open to quiesce. This state is very important because of the transactional nature of ZFS: when an open TXG is moved to the quiesce state, it may still contain transactions that haven’t been committed yet. The txg_quiesce_thread() waits until all of them are committed and then triggers the txg_sync_thread(), the thread that finally writes the data to non-volatile storage.
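The waiting part of the quiesce step is a classic hold-count pattern. This Python sketch uses `threading.Condition`. The class is hypothetical and only mirrors the idea (the real ZFS bookkeeping is more involved): every open transaction holds the group, committing releases the hold, and the quiesce step blocks until the count reaches zero.

```python
import threading
from typing import Optional


class QuiescingTxg:
    def __init__(self, txg: int) -> None:
        self.txg = txg
        self._holds = 0
        self._cv = threading.Condition()

    def hold(self) -> None:
        # A transaction was assigned to this group.
        with self._cv:
            self._holds += 1

    def release(self) -> None:
        # The transaction committed; wake the quiesce step if it was the last.
        with self._cv:
            self._holds -= 1
            if self._holds == 0:
                self._cv.notify_all()

    def wait_quiesced(self, timeout: Optional[float] = None) -> bool:
        # Block until no uncommitted transactions remain (or the timeout hits).
        with self._cv:
            return self._cv.wait_for(lambda: self._holds == 0, timeout=timeout)


txg = QuiescingTxg(7)
for _ in range(3):
    txg.hold()
committers = [threading.Thread(target=txg.release) for _ in range(3)]
for t in committers:
    t.start()
if txg.wait_quiesced(timeout=5.0):
    print("txg", txg.txg, "quiesced; hand it to the sync thread")
for t in committers:
    t.join()
```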

Where Is the Data of Async Writes Stored Until It Is Synced?

As long as the data isn’t synced to disk, it is kept in memory, in the Adaptive Replacement Cache (ARC): ZFS marks the cached data as dirty and writes it to disk with the next transaction group sync. Until then, it exists only in RAM. Thus, when the contents of memory are lost (for example, in a power outage), all async changes that haven’t been synced yet are lost. But that’s why they are async: for writes that can’t accept such a loss, you should use sync writes.
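A toy model makes the consequence visible. In this hypothetical Python sketch, one dict stands in for the dirty data in memory and another for the disk. `txg_sync()` writes everything out in one burst, and `crash()` throws away whatever was only in RAM. It ignores copy-on-write (here the "disk" is simply updated in place) and everything else the ARC does.

```python
from typing import Dict, Optional


class AsyncWrites:
    def __init__(self) -> None:
        self.disk: Dict[int, bytes] = {}    # stands in for stable storage
        self.dirty: Dict[int, bytes] = {}   # dirty data, held only in RAM

    def write(self, block: int, data: bytes) -> None:
        self.dirty[block] = data            # returns at once: async semantics

    def read(self, block: int) -> Optional[bytes]:
        # Readers see the newest version, synced or not.
        if block in self.dirty:
            return self.dirty[block]
        return self.disk.get(block)

    def txg_sync(self) -> None:
        self.disk.update(self.dirty)        # one burst to stable storage
        self.dirty.clear()

    def crash(self) -> None:
        self.dirty.clear()                  # power loss: RAM contents are gone


fs = AsyncWrites()
fs.write(1, b"v1")
fs.txg_sync()
fs.write(1, b"v2")
fs.write(2, b"new")
fs.crash()
print(fs.read(1), fs.read(2))  # b'v1' None
```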

Sync Writes

As you may have noticed, ZFS writes data only in large bursts every few seconds. So how can it maintain the semantics of sync writes? It can’t simply keep the data in memory, because then the data would reside only in volatile memory (DRAM) for several seconds before reaching the disk. To enable sync writes, ZFS handles them specially in the ZFS Intent Log, or ZIL for short.

The ZIL

The ZIL keeps track of all write operations. Whenever you force a sync (for example with fsync()) or write to a file opened with the O_SYNC flag, the ZIL is written to stable storage, by default onto the pool’s disks themselves. The ZIL contains enough information to replay all these changes after a power failure. A ZIL record contains either the modified data itself (for small amounts of data) or a pointer to the data stored elsewhere.
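The choice between the two record kinds could look like this. In this hypothetical Python sketch, `IMMEDIATE_LIMIT` is an invented cut-off between "small" and "large" writes (ZFS has its own rules and tunables for this), and `write_block` is a caller-supplied stand-in for writing a block into the pool and getting back its address.

```python
from dataclasses import dataclass
from typing import Callable, Optional

IMMEDIATE_LIMIT = 32 * 1024  # hypothetical threshold for copying data into the log


@dataclass
class LogRecord:
    obj: int                          # object (file) that was written
    offset: int
    length: int
    data: Optional[bytes] = None      # small write: the data itself
    block_ptr: Optional[int] = None   # large write: where the data already is


def log_sync_write(obj: int, offset: int, data: bytes,
                   write_block: Callable[[bytes], int]) -> LogRecord:
    if len(data) <= IMMEDIATE_LIMIT:
        # Cheap enough to copy into the log record directly.
        return LogRecord(obj, offset, len(data), data=bytes(data))
    # Write the data into the pool once; the log only points at it.
    return LogRecord(obj, offset, len(data), block_ptr=write_block(data))


pool = []                              # block address == list index


def write_block(data: bytes) -> int:
    pool.append(data)
    return len(pool) - 1


small = log_sync_write(1, 0, b"x" * 512, write_block)
large = log_sync_write(1, 4096, b"y" * 128 * 1024, write_block)
print(small.block_ptr, large.block_ptr, len(pool))  # None 0 1
```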

The latter allows a neat trick: when you write a large amount of data, the data is written directly into your pool, and only its block pointer goes into the ZIL record, where it becomes part of the intent log. When the time comes to integrate this write into the consistent on-disk state of your filesystem (with the next transaction group sync), the data isn’t copied from the ZIL to the pool: ZFS simply takes the block pointer and reuses the data block that has already been written.
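Sketched in Python, the sync step for logged writes might look like the function below. It is a hypothetical model: records are plain dicts, the block map is a dict from (object, offset) to a block address, and the `data` field of a small record stands in for the dirty copy still held in memory (the ZIL itself is not read back during a normal sync). Large records cost no additional data write because their block is simply linked in.

```python
from typing import Dict, List, Tuple


def sync_logged_writes(records: List[dict], pool_blocks: List[bytes],
                       block_map: Dict[Tuple[int, int], int]) -> int:
    """Fold sync writes into the on-disk state at txg sync time.

    Each record has "obj", "offset" and either "data" or "block_ptr".
    Returns the number of data blocks written during this sync.
    """
    written = 0
    for rec in records:
        if rec.get("block_ptr") is not None:
            addr = rec["block_ptr"]           # already on disk: reuse, no copy
        else:
            pool_blocks.append(rec["data"])   # small write: write it out now
            addr = len(pool_blocks) - 1
            written += 1
        block_map[(rec["obj"], rec["offset"])] = addr
    return written


blocks = [b"large block written at sync-write time"]
records = [
    {"obj": 1, "offset": 0, "block_ptr": 0},
    {"obj": 1, "offset": 131072, "data": b"small write"},
]
block_map: Dict[Tuple[int, int], int] = {}
print(sync_logged_writes(records, blocks, block_map), block_map)
```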

Whenever a filesystem is unmounted, everything in the ZIL on stable media has been fully processed into the filesystem, and ZFS deletes the ZIL. If there is still a ZIL with active transactions at mount time, the filesystem can’t have been unmounted cleanly. In that case, ZFS replays the transactions in the ZIL, restoring the state as of the last sync write call that returned or the last successful sync of the pool, whichever came later.
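Replay boils down to "apply every logged change that the last synced transaction group doesn’t contain yet, in log order". This Python sketch is hypothetical: a log record here is a tuple of sequence number, txg number, object, offset and data, and the filesystem is a plain dict.

```python
from typing import Dict, List, Tuple

Record = Tuple[int, int, int, int, bytes]  # (seq, txg, obj, offset, data)


def replay_zil(fs: Dict[Tuple[int, int], bytes], log: List[Record],
               last_synced_txg: int) -> int:
    """Bring fs, as of last_synced_txg, up to the last completed sync write.

    Returns the number of records that were replayed.
    """
    replayed = 0
    for _seq, txg, obj, offset, data in sorted(log):  # log order = sequence number
        if txg <= last_synced_txg:
            continue                     # already part of the on-disk state
        fs[(obj, offset)] = data
        replayed += 1
    return replayed


fs = {(1, 0): b"v10"}                    # state from the uberblock of txg 10
log = [
    (1, 10, 1, 0, b"v10"),               # synced with txg 10: skipped
    (2, 11, 1, 0, b"v11"),               # sync writes after the last txg sync
    (3, 11, 1, 4096, b"new"),
]
print(replay_zil(fs, log, last_synced_txg=10), fs)
```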

Separated ZIL

The separated ZIL is an interesting twist to the story of handling the ZIL. Instead of writing the ZIL blocks onto the data disks, you put them onto a different medium. This has two advantages. First, the sync writes no longer eat IOPS from the IOPS budget of your data disks (which is why it can even make sense to use rotating rust disks for this task). Second, you can use SSDs for it: a sync write has to wait until the data has been written successfully, and a rotating disk has a much higher write latency than a flash device. Thus, separating the ZIL onto an SSD while keeping your normal storage on rotating rust gives you the best of both worlds: the incredibly low write latency of SSDs and the vast amounts of cheap magnetic disk storage.

But one thing is important: the separated ZIL solves only one problem, the latency of synchronous writes. It doesn’t help with insufficient bandwidth to your disks. It doesn’t help with reads either (well, that’s not entirely true: fewer IOPS for writes leave more IOPS for reads, but that’s a corner case). However, write latency matters in many places: iSCSI target writes to disk are synchronous by default, most write operations of databases are synchronous, and metadata updates are synchronous. In these cases, an SSD can really help. Or, to be more exact: whenever a separated ZIL has a lower latency than the primary storage, a separate ZIL medium is a good idea. So for local SAS, FC, or SATA storage, an SSD is a good choice; for remote storage (like iSCSI over a WAN), one or two local rotating rust disks can achieve similar results.
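The rule of thumb fits in a few lines of Python. This hypothetical sketch assumes that a sync write’s latency is dominated by writing its log record, so it is whatever the device holding the ZIL needs; bandwidth, reads and queueing are deliberately ignored, matching the limits described above. The latency figures in the usage lines are placeholders, not measurements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Device:
    name: str
    write_latency_ms: float   # time for one small synchronous write


def sync_write_latency(pool: Device, slog: Optional[Device] = None) -> float:
    # A sync write returns once its log record is stable, so it waits for
    # whichever device holds the ZIL: the separate log device or the pool.
    return (slog or pool).write_latency_ms


def separate_log_helps(pool: Device, slog: Device) -> bool:
    # Worthwhile whenever the log device is faster to write than the pool.
    return slog.write_latency_ms < pool.write_latency_ms


# Placeholder numbers for illustration only.
local_disks = Device("local SATA disks", 8.0)
remote_pool = Device("iSCSI over WAN", 40.0)
ssd = Device("SSD", 0.2)

print(separate_log_helps(local_disks, ssd))          # True
print(separate_log_helps(remote_pool, local_disks))  # True
print(sync_write_latency(remote_pool, local_disks))  # 8.0
```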

Conclusion

ZFS goes to great lengths to make write operations as efficient as possible. Its internal architecture makes some techniques feasible that are hard or impossible to implement in other filesystems. And most importantly: the always-consistent on-disk state rests largely on transactions in conjunction with copy-on-write. (Yes, I know it’s more like redirect-on-write, Dirk. 😉)